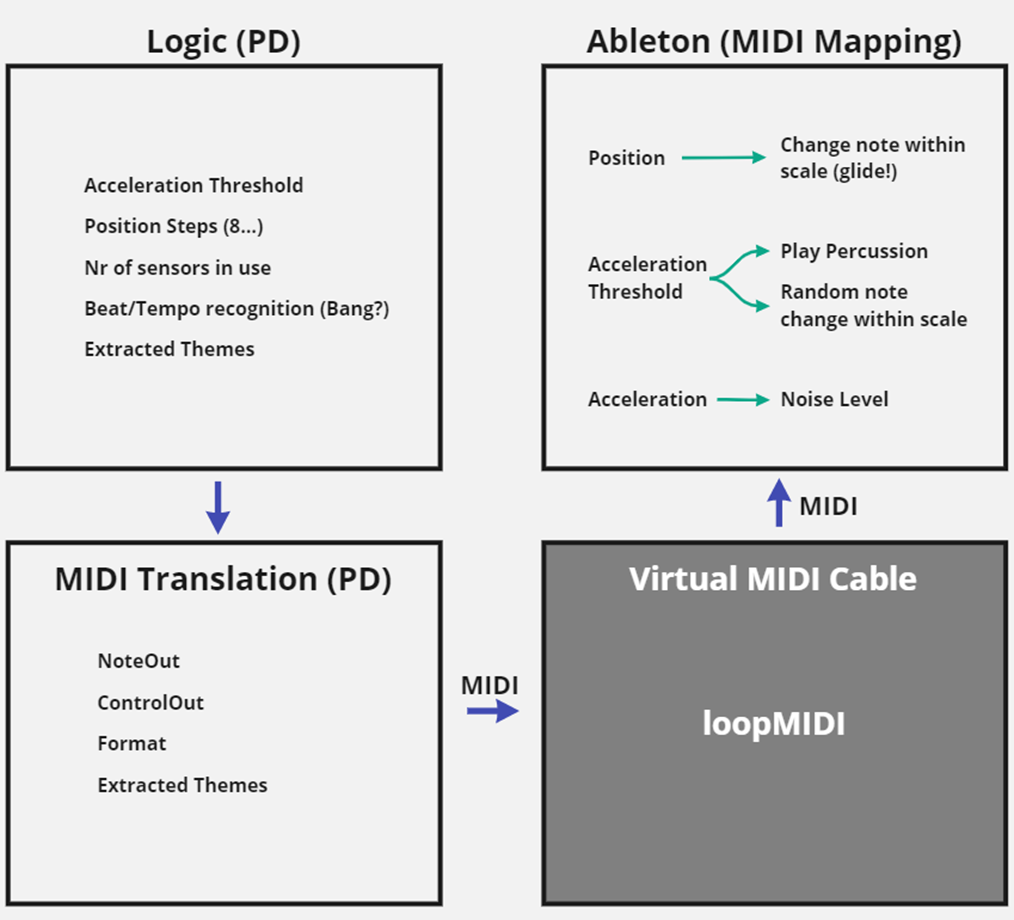

Although the composition should of course always be at the back of my mind while I develop the logic and mappings, the Ableton part is where the music finally comes into play. I have not yet found a good workflow for creating such a composition, since I mostly end up rearranging mappings. Once I know my mappings well, though, I expect the workflow to become easier and more intuitive.

I think Ableton is a great choice for exactly this type of application because it was developed for live settings, and this installation is one of them. Ableton’s Session View is organized into clips that can be looped indefinitely and always play in sync, with no fixed end time. I mostly play all tracks at once and use MIDI parameters to bring up the volume of certain tracks at certain points.

Composing for this format reminds me a lot of what I know about game sound design. The biggest challenge seems to be creating a composition from parts that may combine in ways one can barely anticipate, while still always sounding good and consistent.

For the proof-of-concept composition, I used a stereo atmosphere I recorded myself and adjusted its width depending on the dispersion of the particles. A pad with many voices was always running in the background, with slight pitch adjustments depending on the average position of the particles, as well as a low-pass filter that opens when one’s arm is lifted and closes when it points downwards. With each soft movement, a kind of whistling sound is played, which is also modulated in pitch depending on the arm’s position (up/down). This whistling sound is also sent into a delay with high feedback, making it one of the few sounds at that level that reveal the BPM.

As soon as the second level is reached, a hi-hat with a phaser effect on it starts playing, bringing a bit more of a beat into the soundscape. The phaser is controlled by the average velocity of the particles. On every hard movement, there is a five-percent chance that the hi-hat pattern changes. The third level makes the pad slightly more melodic and introduces a kick and different hi-hat patterns.

All in all, composing the proof of concept was a lot of fun and worked much better than expected. I am looking forward to spending more time composing next semester.
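To make the mappings above a bit more concrete, here is a minimal sketch of how the particle and arm data could be turned into 7-bit MIDI CC values (0 to 127) before being sent to Ableton, plus the five-percent pattern switch. It assumes every input feature has already been normalized to 0..1, except the arm height, which is assumed to run from -1 (pointing down) to 1 (lifted). All function and parameter names, and the number of hi-hat patterns, are hypothetical; how the values actually reach Ableton is not covered here.

```python
import random


def to_cc(value: float, lo: float = 0.0, hi: float = 1.0) -> int:
    """Linearly rescale value from [lo, hi] to a MIDI CC value (0-127), clamping out-of-range input."""
    t = (value - lo) / (hi - lo)
    t = min(max(t, 0.0), 1.0)
    return round(t * 127)


def map_features(dispersion: float, avg_position: float,
                 arm_height: float, avg_velocity: float) -> dict[str, int]:
    """Hypothetical mapping of normalized particle/arm features to CC values."""
    return {
        "atmo_width": to_cc(dispersion),                  # wider stereo image as particles spread out
        "pad_pitch": to_cc(avg_position),                 # slight pad detune from average particle position
        "pad_lowpass": to_cc(arm_height, -1.0, 1.0),      # arm down -> filter closed, arm up -> open
        "whistle_pitch": to_cc(arm_height, -1.0, 1.0),    # whistle pitch follows the arm as well
        "hihat_phaser": to_cc(avg_velocity),              # phaser amount from average particle velocity
    }


def maybe_switch_pattern(current: int, n_patterns: int,
                         rng: random.Random, chance: float = 0.05) -> int:
    """On a hard movement, switch to a different hi-hat pattern with the given probability."""
    if n_patterns < 2 or rng.random() >= chance:
        return current
    return rng.choice([p for p in range(n_patterns) if p != current])


if __name__ == "__main__":
    rng = random.Random(42)
    cc = map_features(dispersion=0.3, avg_position=0.5, arm_height=1.0, avg_velocity=0.0)
    print(cc["pad_lowpass"])  # → 127 (arm fully lifted, filter fully open)
    pattern = maybe_switch_pattern(current=0, n_patterns=4, rng=rng)
    print(pattern)
```

Excluding the current pattern from the random choice means that a "change" is always audible when the five-percent roll succeeds; whether the original setup does this is not stated.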