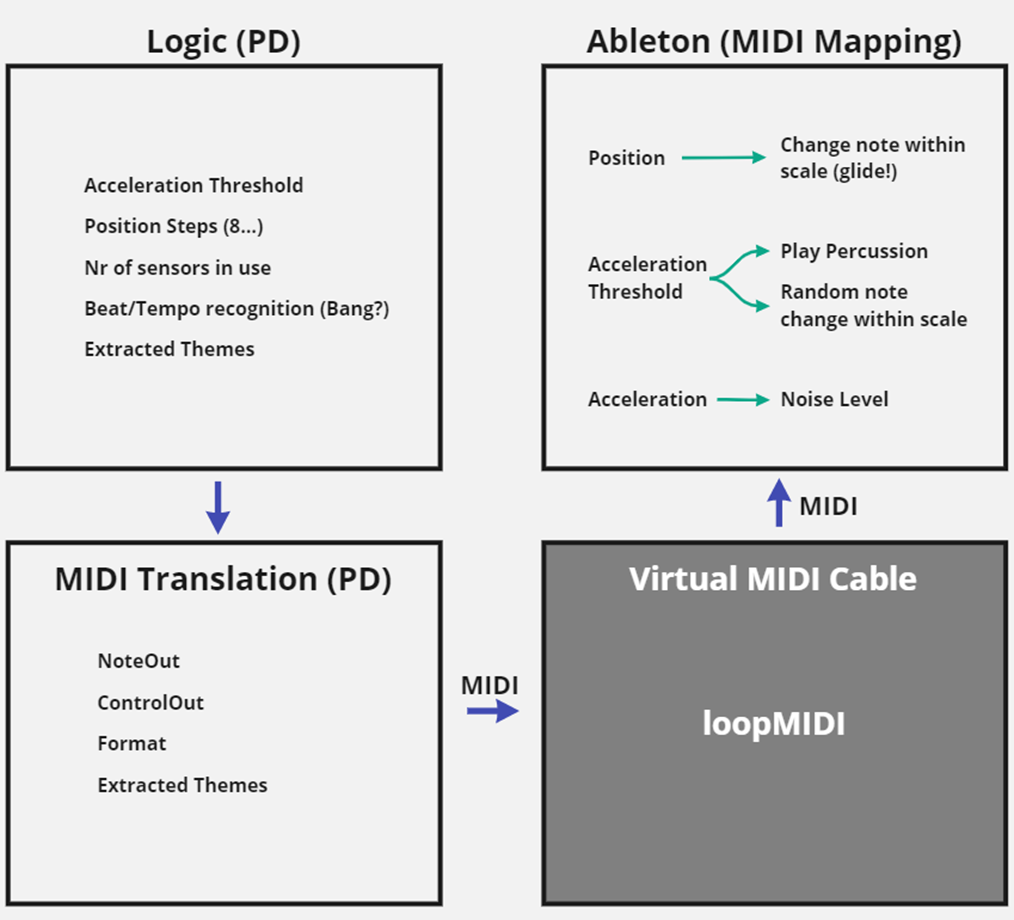

Before I get to the core logic, I would like to lay out what the communication between Pure Data and Ableton looks like. Originally, I had planned to build a VST from my Pure Data patch, so that the VST would simply run inside Ableton and Pure Data itself would no longer need to be open. This also gave me the idea of creating a visualization that provides basic control and monitoring capabilities and lets me easily change thresholds and parameters. I spent some time researching options and came across Camomile, which wraps Pure Data patches into VSTs and offers visualization capabilities based on the JUCE library.

However, it did not take long until I ran into several issues with this communication concept. First, I need to use the inputs of the Expert Sleepers audio interface while the outputs of another interface (or of the computer’s sound card) are active, which is currently not possible natively in Ableton for Windows. ASIO4ALL would offer a workaround, but that was not my preferred solution. Furthermore, a VST always implies that the audio signal flows through it, which I did not really want: I only needed my Pure Data patch to modulate parameters in Ableton, not to have an audio stream running through Pure Data, since that would cause even more audio interface issues. This led me to move away from the VST idea and investigate other communication methods. Two options stood out, the OSC protocol and MIDI. I made the choice quickly, since I figured that the default MIDI resolution of 128 values was enough for my purpose and MIDI is much easier to integrate into Ableton. With that decision made, it was a lot clearer what exactly the core logic needs to entail.
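To make the resolution of 128 concrete, here is a minimal Python sketch of what it means for a modulation value. It is only an illustration, not the Pure Data patch itself: the function names `to_midi_value` and `control_change`, as well as the channel and controller number in the usage line, are assumptions I picked for the example. A normalized value between 0 and 1 is quantized to one of 128 steps and packed into a standard three-byte MIDI Control Change message.

```python
def to_midi_value(x: float) -> int:
    """Quantize a normalized value in [0.0, 1.0] to a 7-bit MIDI value (0..127)."""
    clamped = min(max(x, 0.0), 1.0)  # out-of-range input is clamped, not rejected
    return round(clamped * 127)


def control_change(channel: int, controller: int, value: int) -> bytes:
    """Build a raw 3-byte MIDI Control Change message.

    channel:    0..15 (shown as 1..16 in most software)
    controller: 0..127 (hypothetical choice, whatever gets mapped in the DAW)
    value:      0..127
    """
    if not 0 <= channel <= 15:
        raise ValueError("channel must be in 0..15")
    if not 0 <= controller <= 127:
        raise ValueError("controller must be in 0..127")
    if not 0 <= value <= 127:
        raise ValueError("value must be in 0..127")
    # Status byte 0xB0 marks a Control Change; the low nibble carries the channel.
    return bytes([0xB0 | channel, controller, value])


# Example: send the midpoint of the range on channel 0, controller 1 (assumed).
message = control_change(0, 1, to_midi_value(0.5))
```

With 128 steps, the smallest change a parameter can make is roughly 0.8 % of its range, which is the trade-off I accepted by choosing MIDI over OSC.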