Software

The software approach in the experiment phase required a few more pre-processing steps than the approach in the final phase but was otherwise very similar. First, the wristband sensor data was read in and processed in Pure Data. From this data, modulators and control variables were created and adjusted. Those variables were used in Ableton (Session View) to modulate and change the soundscape. During the composition, Ableton was therefore where sounds were played and synthesized, whereas Pure Data handled the sensor input and converted it into logical modulator variables that controlled the soundscape over the short and long term. This communication approach stayed the same in the later phase. In the following paragraphs, I will describe the parts that differed from the final software approach.

Data Preparation

The data preparation was probably the biggest difference compared to the new system. Since I was essentially working with analog data in the experiment phase, thorough cleaning was necessary to reach the required accuracy. The wristband data I received in Pure Data was subject to various physical influences such as gravity, electronic inaccuracies and interference, and simple losses or transmission problems between the different modules. At the same time, fairly accurate responses to movements were needed. Furthermore, the rotation data jumped from 1 to 0 at each full rotation (just as an angle jumps from 360 to 0 degrees), and this also had to be translated into a smooth signal without jumps; a transformation with the help of a sine signal was very helpful here. The signal bounds (0 and 1) of the received values were also rarely fully reached, which called for a way to reliably achieve the same results with different sensors at different times. I developed a principle of using the min/max values of the raw data and stretching that range to 0 to 1. This meant that after switching the system on, each sensor needed to be rotated in all directions for the Pure Data patch to “learn” its bounds. I also decided that there was no real advantage in using the acceleration values of the individual axes, and used only the total acceleration (the magnitude of the three-axis vector, calculated with the Pythagorean theorem).
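The three cleaning steps can be illustrated outside of Pure Data. The following Python code is only a minimal sketch of the idea, not the actual patch: the names `RangeLearner`, `smooth_rotation` and `total_acceleration` are hypothetical, and the exact sine mapping (one sine period per rotation, rescaled to 0 to 1) is an assumption.

```python
import math


class RangeLearner:
    """Learns the min/max of a raw sensor value and stretches it to 0..1."""

    def __init__(self):
        self.lo = math.inf
        self.hi = -math.inf

    def update(self, raw):
        # Widen the known bounds whenever a new extreme is observed.
        self.lo = min(self.lo, raw)
        self.hi = max(self.hi, raw)
        span = self.hi - self.lo
        if span == 0:
            return 0.0  # bounds not learned yet
        return (raw - self.lo) / span


def smooth_rotation(phase):
    """Maps a rotation value in 0..1 (wrapping at 1) to a jump-free 0..1 signal.

    Assumption: one sine period per full rotation, so 0 and 1 give the same output.
    """
    return 0.5 + 0.5 * math.sin(2.0 * math.pi * phase)


def total_acceleration(ax, ay, az):
    """Magnitude of the acceleration vector (Pythagorean theorem in 3D)."""
    return math.sqrt(ax * ax + ay * ay + az * az)
```

Note that a single sine value maps two different rotation angles to the same output; if the direction needs to stay distinguishable, a cosine of the same angle could be added as a second variable.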

Mapping & Communication Method

Originally, I had planned to create a VST from my Pure Data patch, meaning that the VST would run within Ableton and there would be no need to open Pure Data itself anymore. This also gave me the idea to create a visualization that provides basic control and monitoring capabilities and lets me easily change thresholds and parameters. I spent some time researching possibilities and found the Camomile package, which wraps Pure Data patches into VSTs, with visualization capabilities based on the JUCE library.

However, there were several issues with my communication concept. First, the inputs of the Expert Sleepers audio interface needed to be used while the outputs of another interface (or of the computer’s sound card) were active, which is currently not possible natively in Ableton for Windows. There would be a workaround using ASIO4ALL, but that was not a preferred solution. Second, a VST always implies that the audio signal flows through it, which was not wanted: I only needed to modulate parameters in Ableton with my Pure Data patch, not to have an audio stream flowing through Pure Data, since that might create even more audio interface issues.

This led to the investigation of different communication methods. There were two obvious possibilities: the OSC protocol and MIDI. I chose MIDI, since the standard MIDI resolution of 128 steps (values 0 to 127) was sufficient for this purpose and MIDI was much easier to integrate into Ableton.

Core Logic

There were several differences in the way the logic was built in the experiment and product phases, but with very few exceptions, I would rather see them as an evolution and a learning process. In the experiment phase, a lot more functionality was ‘hard coded’, such as the different threshold modules that I called ‘hard’ and ‘soft’ movements.

An important development for the whole project was the creation of levels based on the listeners’ activity. I consider these levels an essential tool for a composition that can match its listeners’ energy, and a crucial means of storytelling. All movement activity is constantly written into a 30-second ring buffer, and the percentage of activity within the ring buffer is calculated. If the activity percentage within those 30 seconds crosses a threshold (e.g., around 40%), the next level is reached. If the activity percentage stays below a threshold for long enough, the previous level becomes active again.
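The level mechanism can be sketched in Python as follows. This is a simplified illustration rather than the Pure Data implementation: the class name `ActivityLevels`, the sample rate (10 samples per second, so 300 samples for 30 seconds), the lower threshold, the `patience` counter and the choice to clear the buffer after a level-up are all assumptions made for the example.

```python
from collections import deque


class ActivityLevels:
    """Tracks an activity level from a ring buffer of active/inactive samples."""

    def __init__(self, window=300, up=0.4, down=0.1, patience=100, max_level=3):
        self.buffer = deque(maxlen=window)  # ring buffer: old samples drop out
        self.up = up              # activity ratio needed to reach the next level
        self.down = down          # ratio below which the listener counts as quiet
        self.patience = patience  # quiet samples needed before stepping back
        self.max_level = max_level
        self.level = 0
        self.quiet = 0

    def update(self, active):
        """Adds one sample (True = movement detected) and returns the level."""
        self.buffer.append(1 if active else 0)
        ratio = sum(self.buffer) / len(self.buffer)
        full = len(self.buffer) == self.buffer.maxlen
        if full and ratio >= self.up and self.level < self.max_level:
            self.level += 1
            self.buffer.clear()  # assumption: measure activity afresh per level
            self.quiet = 0
        elif ratio < self.down:
            self.quiet += 1
            if self.quiet >= self.patience and self.level > 0:
                self.level -= 1
                self.quiet = 0
        else:
            self.quiet = 0
        return self.level
```

Requiring a full buffer before a level-up prevents a single early movement from immediately triggering the next level.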

While all those mechanisms introduced a certain level of interactive complexity, the mappings risked becoming monotonous and boring over time. To remedy that issue, I got the suggestion to use particle systems inside my core logic to create more variation and more interesting mapping variables. The particle system ran inside the GEM engine, which is part of the GEM external for Pure Data. Setting the particles’ three-dimensional spawn area to move depending on the rotation of the three axes of the wristband produced an interesting system, especially in combination with movement of the orbital point of the particles. Mappable variables were then created from statistical parameters: the average X, Y and Z position of all particles, the average velocity of all particles, and the dispersion from the average position. While those variables worked well in a mapping context, I decided not to use them in the next project phase, because I adjusted my workflow to use variables mainly for direct and perceptible mappings, while implementing random and arbitrary elements within Ableton.
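How such statistical variables can be derived from a set of particles is shown in the following Python sketch. It does not use GEM and is not the patch itself; the function name `particle_stats` is hypothetical, "average velocity" is interpreted here as the mean speed (vector magnitude), and "dispersion" as the mean distance of the particles from their average position. Both interpretations are assumptions.

```python
import math


def particle_stats(positions, velocities):
    """Returns (centroid, mean_speed, dispersion) for lists of 3D tuples."""
    n = len(positions)
    # Average X, Y and Z position over all particles.
    centroid = tuple(sum(p[i] for p in positions) / n for i in range(3))
    # Mean speed: average length of the velocity vectors.
    mean_speed = sum(math.sqrt(vx * vx + vy * vy + vz * vz)
                     for vx, vy, vz in velocities) / n
    # Dispersion: average distance of the particles from the centroid.
    dispersion = sum(math.dist(p, centroid) for p in positions) / n
    return centroid, mean_speed, dispersion


centroid, mean_speed, dispersion = particle_stats(
    positions=[(0, 0, 0), (2, 0, 0)],
    velocities=[(3, 4, 0), (0, 0, 0)],
)
```

Each of the resulting values could then be normalized and used as a mapping variable.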

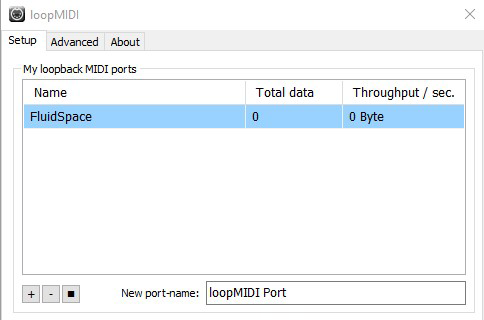

MIDI

To send MIDI data to Ableton, a virtual MIDI cable needs to be running. For this project, I used the freeware “loopMIDI”. It acts as a MIDI device that I can configure as the MIDI output device in Pure Data’s settings and as a MIDI input device in Ableton.

In Pure Data, I need to bring the data into the right format (0 to 127) to be sent via MIDI and then simply use a block that sends it to the MIDI device, specifying value, device, and channel numbers. I mainly use the “ctlout” and “noteout” blocks.
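To make the format more concrete, the following Python sketch shows how a normalized variable can be scaled to the MIDI range and what the resulting Control Change message looks like at byte level. It only illustrates what a block like “ctlout” sends; the function names `to_midi` and `control_change` are hypothetical, and the assumption is that the modulator variables are already normalized to 0 to 1.

```python
def to_midi(value):
    """Scales a normalized value (0..1) to a 7-bit MIDI value (0..127)."""
    clamped = min(1.0, max(0.0, value))  # guard against out-of-range input
    return round(clamped * 127)


def control_change(channel, controller, value):
    """Builds the three bytes of a MIDI Control Change message.

    channel is 1..16; controller and value are 0..127.
    """
    if not 1 <= channel <= 16:
        raise ValueError("channel must be between 1 and 16")
    if not 0 <= controller <= 127 or not 0 <= value <= 127:
        raise ValueError("controller and value must be between 0 and 127")
    status = 0xB0 | (channel - 1)  # 0xB0 marks a Control Change message
    return bytes([status, controller, value])


# Example: send a fully open modulator as controller 20 on channel 1.
message = control_change(1, 20, to_midi(1.0))
```

The controller number 20 is an arbitrary choice for this example.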

In Ableton, there is a mapping mode that lets one map almost every changeable parameter to a MIDI counterpart. As soon as I turn on the mapping mode, I can select any parameter that I want to map, and Ableton maps it directly to the next MIDI input it receives. The mapping immediately shows up in my mapping table with the option to change the mapping range.