Introduction

The introduction of digital musical instruments (DMIs) has removed the need for a physically resonating body in order to create music, leaving the practice of sound-making often decoupled from the resulting sound. The inclination towards smooth and seamless interaction in the creation of new DMIs has led to the development of musical instruments and interfaces for which no significant transfer of energy is required to play them. Other than structural boundaries, such systems usually lack any form of physical resistance, whereas the production of sounds through traditional instruments happens precisely at the meeting of the performer’s body with the instrument’s resistance: “When the intentions of a musician meet with a body that resists them, friction between the two bodies causes sound to emerge.” Haptic controllers offer the ability to engage with digital music in a tangible way.

Background

Dynamic relationships occur and are ongoing between the performer, the instrument, and the sounds produced when playing musical instruments. These exchanges depend upon the sensory feedback provided by the instrument in the forms of auditory, visual, and haptic feedback. Because digital interfaces based around an ergonomic HCI model are generally designed to eliminate friction altogether, the tactile experience of creating a sound is reduced. Even though digital interfaces are material tools, the feeling of pressing a button or moving a slider does not provide the performer with much physical resistance, whereas the engagement required to play an acoustic instrument provides musicians with a wider range of haptic feedback involving both cutaneous and proprioceptive information, as well as information about the quality of an occurring sound. This issue is recognized in Claude Cadoz’s work regarding his concept of ergoticity as the physical exchange of energy between performer, instrument, and environment. A possible solution to these issues is the use of haptic controllers. As has been previously noted, “we are no longer dealing with the physical vibrations of strings, tubes and solid bodies as the sound source, but rather with the impalpable numerical streams of digital signal processing”. In physically realizing the immaterial, the design of the force profile is crucial because it determines the overall characteristics of the instrument.

My Conclusion

Combining the haptic feedback of Cross-Modal Terrains with sound is a very interesting way to experience the feel of a terrain. Rendering the structure of surface imperfections would be the next step to make an impact and improve the experience of Cross-Modal Terrains.

Furthermore, technology already exists that produces haptic feedback through ultrasonic waves. One example is the company Ultraleap with its STRATOS Inspire, in which sound waves are concentrated into one point. This would be an interesting approach for testing haptic feedback with modern technology and might give a more detailed response.

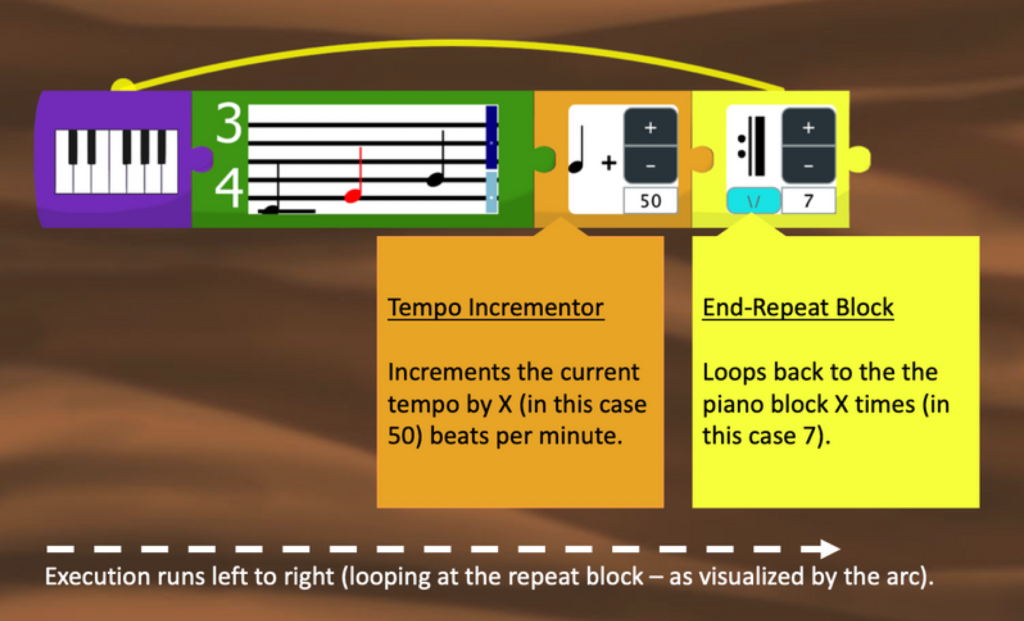

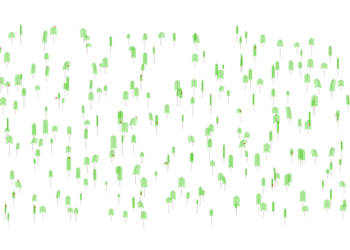

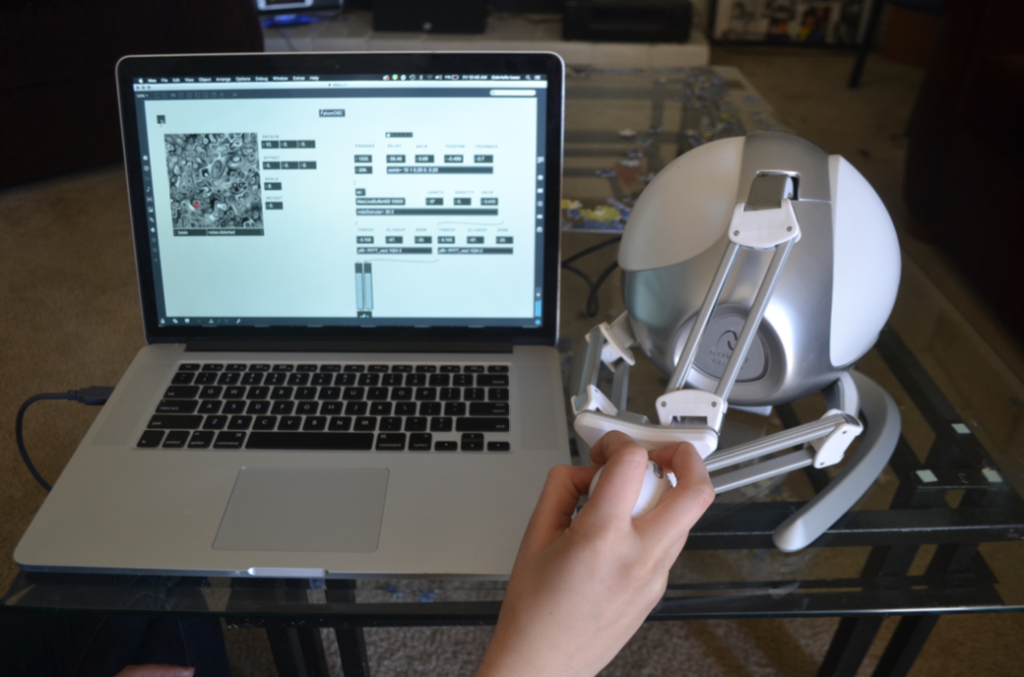

Using Max 8 for their testing was a good choice, because it connects well with devices and makes it easy to monitor the values and the terrain, which is provided as a greyscale 2D image. This makes it very simple to see where we are on the surface and how intense the feedback is at that point.
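To make the idea of reading a position and an intensity from a greyscale image more concrete, here is a minimal Python sketch. It is not the authors' Max patch: the function names `sample_terrain` and `feedback_intensity` are hypothetical, and the assumption that lighter pixels mean stronger feedback is mine, chosen only for illustration.

```python
def sample_terrain(image, x, y):
    """Bilinearly interpolate a greyscale image at a fractional position.

    `image` is a list of rows holding values from 0 (black) to 255 (white);
    `x` is the column position and `y` is the row position.
    """
    height = len(image)
    width = len(image[0])
    # Clamp the position so that it always stays on the surface.
    x = min(max(x, 0.0), width - 1.0)
    y = min(max(y, 0.0), height - 1.0)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0
    # Blend along x on the two neighbouring rows, then blend along y.
    top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
    bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
    return top * (1 - fy) + bottom * fy


def feedback_intensity(image, x, y, max_level=255.0):
    """Map the sampled grey level to an intensity between 0.0 and 1.0.

    Assumption for this sketch: lighter pixels mean stronger feedback.
    """
    return sample_terrain(image, x, y) / max_level


# A tiny 2x2 terrain: black on the left, white on the right.
terrain = [
    [0, 255],
    [0, 255],
]
halfway = feedback_intensity(terrain, 0.5, 0.0)
```

Interpolating between pixels, rather than reading the nearest one, is a design choice that keeps the intensity changing smoothly as the position moves across the image.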

–

Isaac, Gabriella; Hayes, Lauren; Ingalls, Todd (2017). Cross-Modal Terrains: Navigating Sonic Space through Haptic Feedback. https://zenodo.org/record/1176163