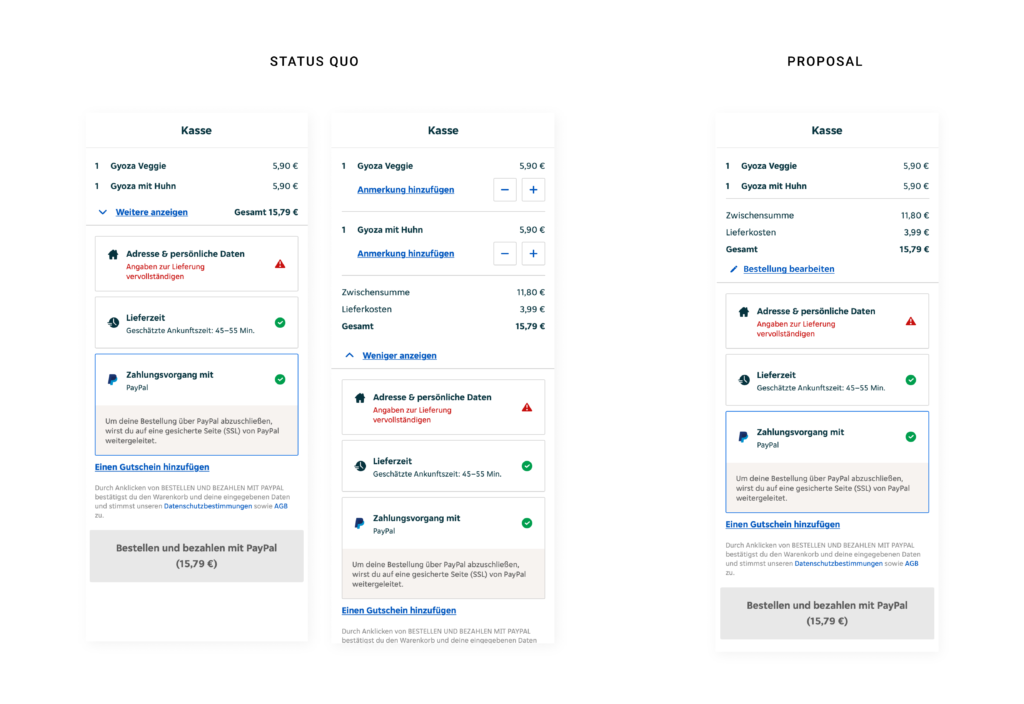

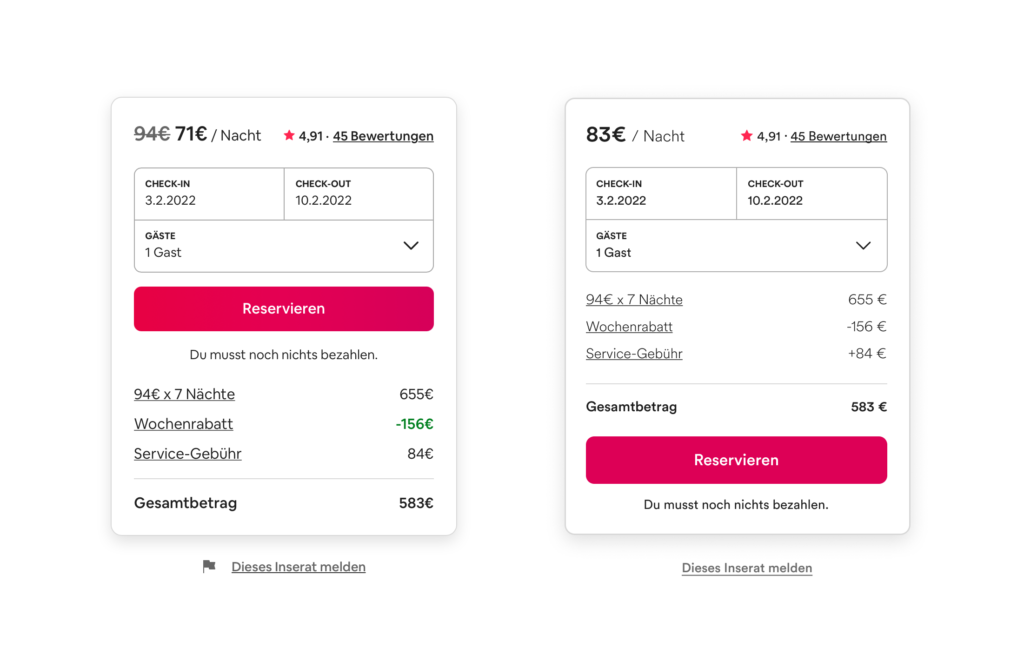

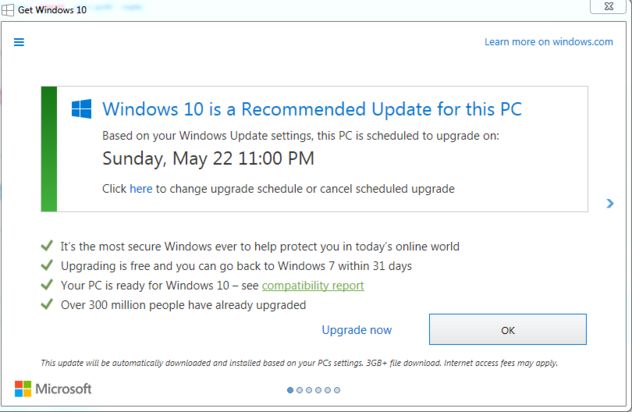

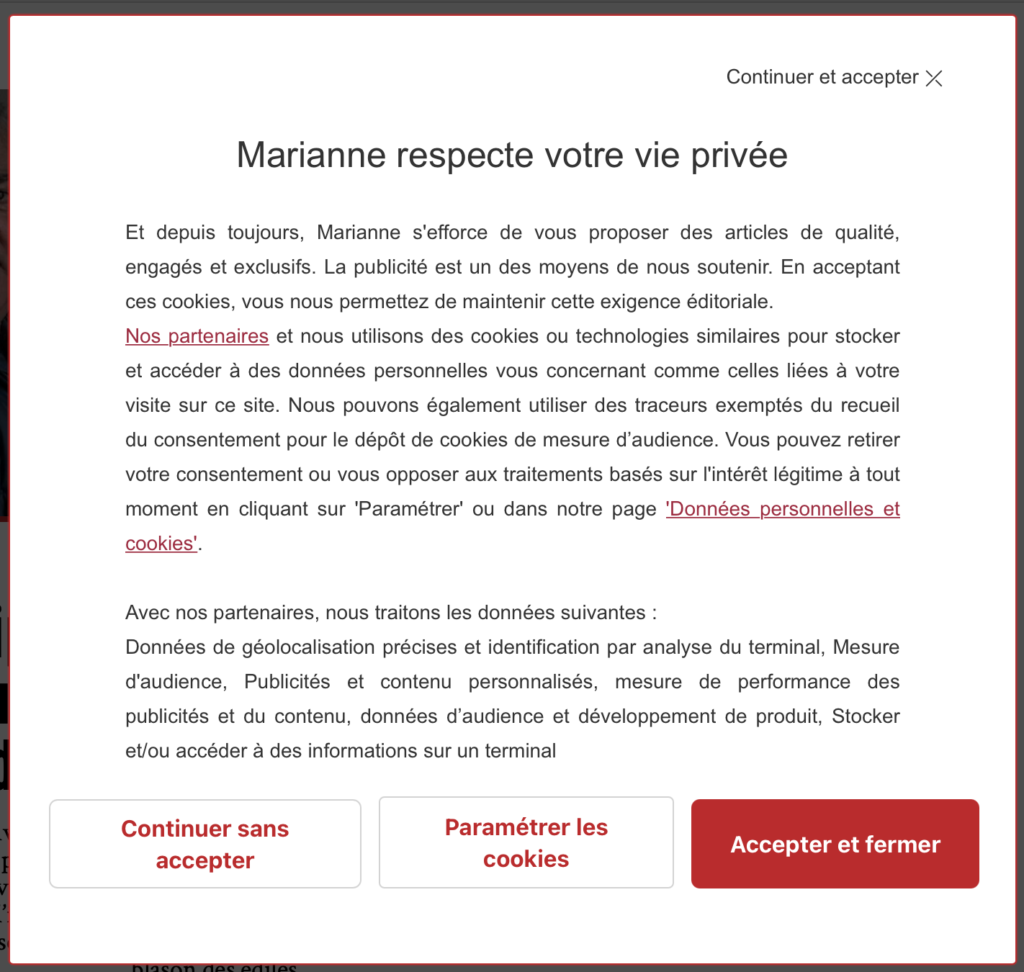

The starting point for this work is the “Dark Patterns Taxonomy” developed by Harry Brignull, which provides a detailed classification of the different types of dark patterns. (Brignull, 2011) Several theories have also been proposed to explain the use of dark patterns in user interface design. One theory is that dark patterns result from a conflict between the interests of designers, who are often motivated by profit or other commercial goals, and the interests of users, who may not be aware that they are being manipulated. On this view, designers use dark patterns because they achieve those goals more effectively than other, more transparent methods of persuasion. (Fogg, 2003, p. 16)

Another theory is that dark patterns result from a lack of ethical awareness or consideration on the part of designers. On this view, designers may not intend to deceive or manipulate users; they may simply be unaware of the potential negative consequences of their design choices.

There has been much debate in the field of human-computer interaction (HCI) about the ethical implications of dark patterns and the role of designers in promoting or preventing their use. Some HCI researchers argue that designers have a responsibility to consider the ethical implications of their work, and to design interfaces that respect the autonomy and well-being of users. (Harris & Light, 2012, p. 51) Others argue that it is not the role of designers to dictate user behavior, and that users should be empowered to make their own decisions about how to interact with technology.

Several principles have been proposed to guide the ethical design of user interfaces, including transparency, fairness, choice, and respect for user autonomy. Applying these principles can help designers create interfaces that are less manipulative and that give users more control over their interactions with technology.

Persuasion is the act of influencing someone’s beliefs, attitudes, or behaviors through communication. It is a common goal of marketing and advertising, often pursued through techniques such as appeals to emotion, appeals to authority, and framing. In the context of dark patterns, persuasion is used to steer users into actions they might not otherwise take, typically through deceptive or manipulative techniques. (Hassenzahl & Tractinsky, 2006, p. 92)

Sources:

Brignull, Harry. 2011. “Dark Patterns: Deception vs. Honesty in UI Design.” A List Apart (blog), 1 November 2011. http://alistapart.com/article/dark-patterns-deception-vs.-honesty-in-ui-design/.

Fogg, B. J. 2002. “Persuasive Technology: Using Computers to Change What We Think and Do.” Ubiquity 2002 (December): 2. https://doi.org/10.1145/764008.763957.

Harris, J, and B Light. 2012. “Ethical Design and the Responsibility of HCI.” Interactions 19 (5): 50-53.

Hassenzahl, M, and N Tractinsky. 2006. “User Experience – A Research Agenda.” Behaviour & Information Technology 25 (2): 91-97.