A final summary of the ideation on my first master thesis topic option.

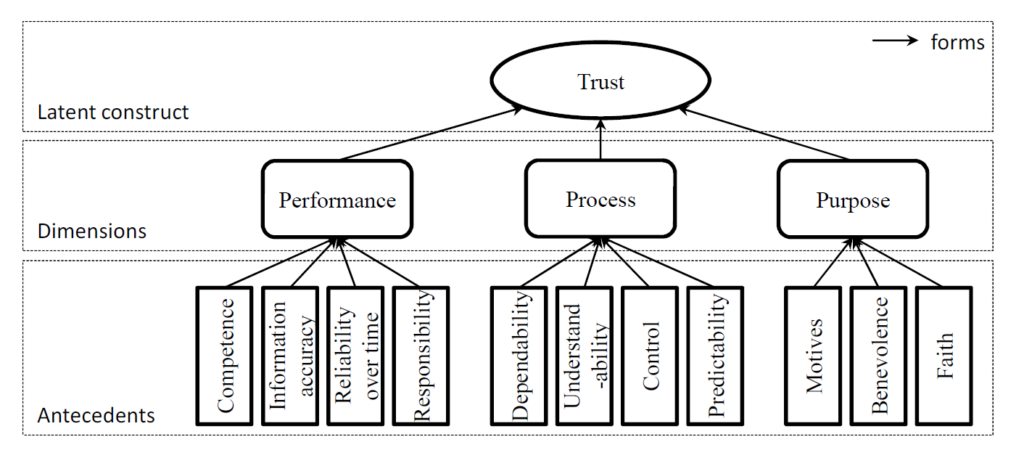

During the third semester of my studies, I researched different aspects of trust in in-vehicle driver assistance systems. I formulated the following first proposal to summarize the direction:

Working Title: UI design solutions for trust in driver assistance systems

Advanced driver assistance systems (ADAS) are broadly present in today’s vehicles. Their contribution to driving could possibly increase in the future until reaching full autonomy, but only if the drivers are willing to let them take over control. Trust in such safety-critical automated systems is key to their acceptance and use. The user interface design of ADAS should be intuitive and suitable for all users to ensure comfort and prevent doubting or misinterpreting the system’s actions.

With my master thesis, I aim to explore the communication and interaction elements of ADAS user interfaces, and how they can be made intuitive and individually suitable for different user needs. After theoretical research and an analysis of the existing systems, I want to carry out user tests comparing different design solutions to answer the following questions:

- Which properties of ADAS user interfaces are necessary for users’ trust?

- Which UI elements could and should be individualized?

- What can a framework for individualizing ADAS UIs look like to maximize users’ trust in the system?

Further work was put into formulating the exposé of the thesis. Within the exposé, the following main and sub-questions were defined:

Main question:

What should a framework for individualizing ADAS UIs contain to maximize users’ trust in the system?

Sub-questions:

- Which properties of ADAS user interfaces are necessary for users’ trust?

- Which factors influence the users’ mental models of the system?

- Which UI elements could and should be individualized?

- Which control possibilities are necessary in autonomous driving mode for maintaining trust?

After discussions with and feedback from an expert in this field, I looked for more specific focus points that could better distinguish the master thesis from existing research.

I came up with the following areas to look into and used OpenAI’s ChatGPT [1] to get more insight into the specific topics, with the aim of better understanding the background and having a firmer foundation for choosing the right topic for the thesis.

- Locus of control / UI control systems design >> which control options are essential in autonomous driving to ensure occupant comfort?

The degree to which a person feels they have influence over how things turn out in their life is referred to as locus of control. [2] The locus of control in the context of autonomous driving refers to the control options available to the passengers and their perception of their level of control over the vehicle’s operation.

It is crucial to offer a variety of control options that give passengers a sense of control over the operation of the vehicle in order to assure occupant comfort in autonomous driving.

This can include controls for changing the vehicle’s speed and direction, regulating the interior environment (such as the entertainment systems and climate control), and giving feedback and information on how the vehicle is operating and the surrounding traffic conditions.

Striking a balance between giving people control and preserving the safety and dependability of the vehicle is an important factor to take into account when designing control options for autonomous vehicles. For instance, having too much control over how the car operates could make it more likely to have accidents or malfunctions, while having too little control could make the occupants feel uncomfortable and powerless.

To guarantee occupant comfort and satisfaction, it is crucial to take the differing preferences and expectations of different occupant groups into account when designing control options. This can entail offering a variety of control options, letting users customize their control preferences, and giving occupants clear, understandable feedback on how the vehicle is doing.

- Anthropomorphism >> Individualization options when the vehicle becomes “Alexa” and has a personality

Anthropomorphism is the attribution of human-like traits to non-human entities, such as objects, animals, or technology. [3] In the context of in-car driver assistance systems, anthropomorphism can be employed to make the vehicle feel more human-like and unique to the driver, which can then improve trust in the technology.

The availability of personalization options can significantly contribute to the anthropomorphism of in-car driver assistance systems. For instance, a car can resemble a personal assistant or friend more closely if its voice and personality can be customized by the driver.

The implementation of AI would be the next step, where the system would learn about the drivers’ or occupants’ habits and preferences and adapt itself to their needs.

- XAI – explainable AI >> User interfaces and information about the vehicle’s AI system

Explainable AI (XAI) is a field of research that aims to make AI systems more transparent and interpretable, so that their behavior can be understood and trusted by users. If AI systems are applied in vehicles, it would be highly important for users to understand them, and the user interface would have to support that understanding. UI design can therefore be a crucial challenge from this point of view as well.

After discussing these topics with other experts, questions came up about the influence of cultural differences on the perception of and trust in automated systems. Factors such as cultural norms, values, beliefs and attitudes towards technology can play a role, as can preferences for how information is received: short and straightforward, or more detailed explanations. Cultural psychology would be an area to dig deeper into for insights on these differing perceptions. The topic of individualizing user interfaces also appears highly relevant and necessary in this respect.

After these thoughts, I came up with the following summarizing problem statement for the thesis:

“How to design anthropomorphic user interfaces for autonomous vehicles that are sensitive to cultural and individual differences in drivers’ preferences and expectations for anthropomorphic design, and support personalization and inclusive user experiences?”

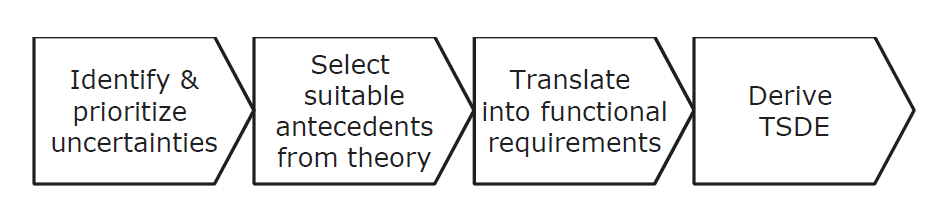

To build a thesis around this problem statement, I applied the steps of Human-Centered Design (HCD): research, ideate, prototype and test. They could be implemented in the following way:

- Research to understand the cultural and individual differences in drivers’ preferences and expectations for anthropomorphic design

- Generate ideas for how to design an anthropomorphic UI that is sensitive to cultural and individual differences

- Build a prototype out of the ideated solutions

- User testing with drivers from different cultural backgrounds

- With the help of the testing results, refine the concept

- Ideally, test the refined prototype again to gain insights on the refinement

- Document the results

As these steps alone would not suffice as thesis content, I searched for other methods to combine with the HCD approach. Eventually I came across the Design Science Research method [4], which can complement the HCD steps with a problem definition phase.

By combining the two methods, I could define possible chapters / parts of the thesis in the following order:

- Introduction: Outlining the research question, discussion of importance and backgrounds.

- Literature review: Review of existing literature on the topic of designing anthropomorphic UIs for autonomous vehicles, and the challenges and opportunities involved in designing UIs that are sensitive to cultural differences. Also including relevant design methodologies, such as Human-Centered Design (HCD) and Design Science Research (DSR), as well as specific challenges and considerations related to designing UIs for autonomous vehicles.

- Research methods: Description of the research methods used to gather insights into drivers’ preferences and expectations for anthropomorphic design – interviews and surveys.

- Results: Presentation of the results and interesting insights from the research.

- Design concepts: Description of the design concepts developed based on the research and the principles of HCD and DSR.

- Implementation and testing: Process of prototyping and testing the design concepts, including challenges or obstacles and how they were overcome.

- Evaluation and refinement: Discussing the results of the testing and evaluation, and description of how this information was used to refine and improve the design.

- Conclusions and future work: Summary of key findings, implications of the work. Outline of potential avenues for future research on this topic.

A timetable and a preliminary bibliography were also put together for the exposé, but for the reasons below they are no longer relevant, so I won’t include them in this post.

I approached some companies with my master thesis proposal to ask for collaboration, support or employment on this topic, but did not receive any positive offer. After assessing my options, and considering that industry support and insight into state-of-the-art development would be necessary to produce useful results that are not already outdated, I decided not to continue with this topic as my master thesis.

I found a similarly interesting research project at the university to write my thesis on, so I am not sad about the decision. Still, I won’t lose interest in ADAS UI development and the topics of trust, individualisation, anthropomorphism and cultural psychology. I hope to be able to dig into these areas later in my career as a UX designer and automotive engineer.

Sources:

[1] ChatGPT by OpenAI – https://chat.openai.com/chat

[2] Locus of control – Wikipedia article. Last opened on 06.02.2023 https://en.wikipedia.org/wiki/Locus_of_control

[3] Anthropomorphism – Wikipedia article. Last opened on 06.02.2023 https://en.wikipedia.org/wiki/Anthropomorphism

[4] Design Science Research Methodologie – shribe.de, 09.03.2021. Last opened on 06.02.2023 https://shribe.de/design-science-research-methodologie/