By Beat Rossmy, Sebastian Unger, Alexander Wiethoff

https://nime.pubpub.org/pub/touchgrid/release/1

Musical grid interfaces have been used for around 15 years as a way to produce and perform music. The authors of this article set out to adapt the grid interface and extend it with another layer of interaction: touch.

Touch interactions can be divided into three different groups:

- Time-based Gestures (bezel interactions, swiping, etc.)

- Static Gestures (hand postures, gestures, etc.)

- Expanded Input Vocabulary (using finger orientation towards the device surface)

In their experiments, they focused mainly on time-based gestures and how these can be implemented in grid interfaces.

First Prototype

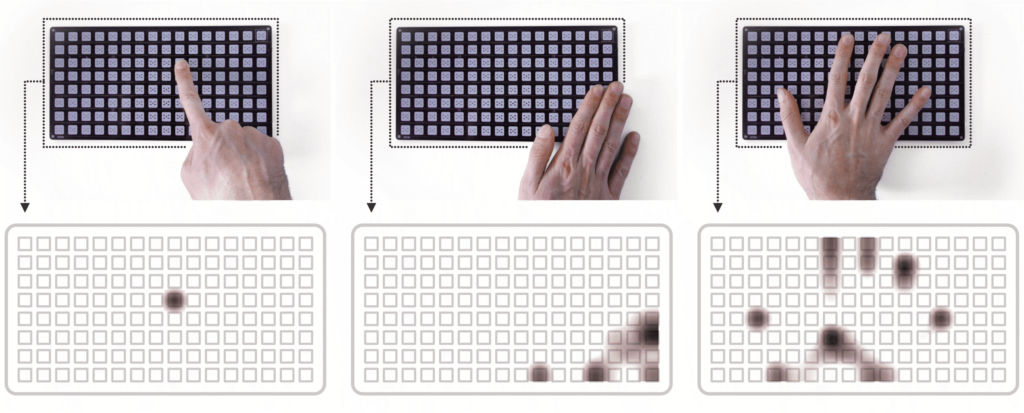

Their first prototype was built from a 16×8 grid PCB with 128 touch areas instead of buttons. This interface could record hand movements at a low resolution in order to detect static and time-based gestures. However, they had problems detecting fast hand movements, which they could not solve without a major change to the hardware.
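To make the idea of recording hand movements at low resolution more concrete, here is a minimal Python sketch. It is not the authors' implementation: it assumes each reading of the 16×8 grid arrives as a frame of booleans (touched or not), and the names `Frame`, `centroid`, and `horizontal_motion` are hypothetical. It tracks the centroid of the touched areas across frames to estimate horizontal movement.

```python
from typing import List, Optional, Tuple

COLS, ROWS = 16, 8  # 128 touch areas, as in the first prototype

# Assumption: one frame is ROWS lists of COLS booleans; True means "touched".
Frame = List[List[bool]]


def centroid(frame: Frame) -> Optional[Tuple[float, float]]:
    """Return the mean (column, row) of all touched areas, or None if untouched."""
    touched = [(c, r)
               for r, row in enumerate(frame)
               for c, on in enumerate(row) if on]
    if not touched:
        return None
    n = len(touched)
    return (sum(c for c, _ in touched) / n, sum(r for _, r in touched) / n)


def horizontal_motion(frames: List[Frame]) -> float:
    """Net column displacement of the centroid between the first and last touched frame."""
    points = [p for p in map(centroid, frames) if p is not None]
    if len(points) < 2:
        return 0.0  # not enough touched frames to measure any movement
    return points[-1][0] - points[0][0]
```

A static gesture would be read from a single frame, while a time-based gesture needs a sequence of frames, which is why the sketch separates `centroid` from `horizontal_motion`.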

TouchGrid

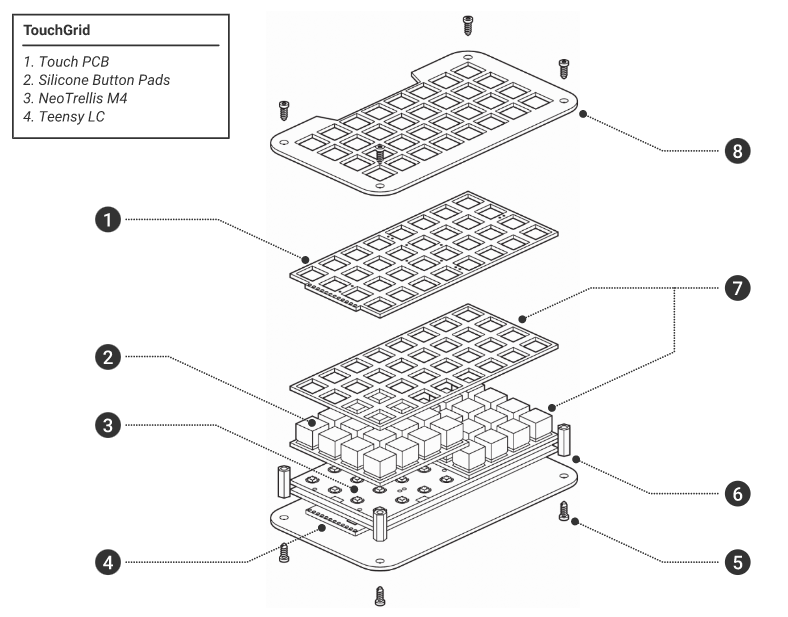

For their second prototype, they used an Adafruit NeoTrellis M4, which consists of an 8×4 grid of LED buttons that can give RGB feedback.

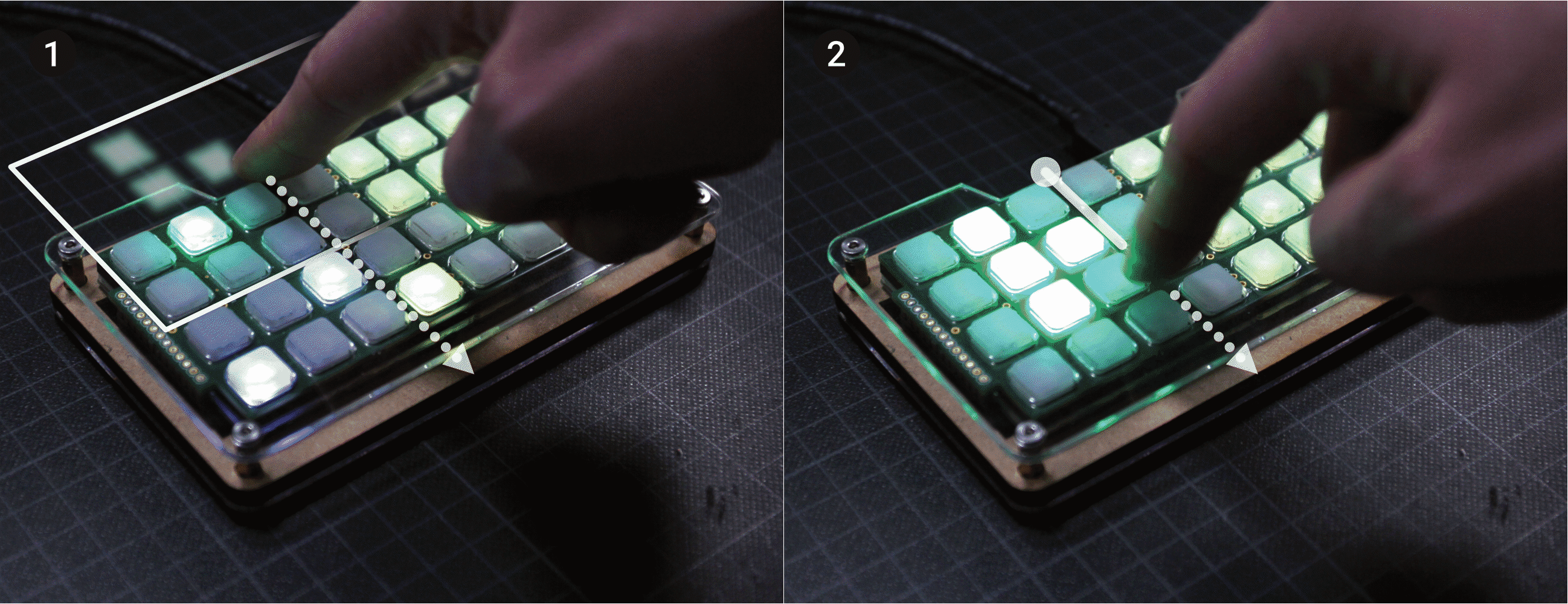

They managed to incorporate two time-based interactions: dragging from off-screen (to access infrequently used features such as menus) and horizontal swiping (to switch between linearly arranged content). To help users understand the different relationships and features without overwhelming them, they also incorporated animations.
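The two time-based interactions can be illustrated with a small Python sketch. This is not the TouchGrid firmware, only an assumption-laden illustration: touches are assumed to arrive as time-ordered `(time, column, row)` samples on the 8×4 grid, the off-screen drag is assumed to enter from the top edge, and `classify_gesture`, `max_duration`, and `min_cells` are hypothetical names and thresholds.

```python
from typing import List, Optional, Tuple

COLS, ROWS = 8, 4  # NeoTrellis M4 layout: 8 columns by 4 rows

# Assumption: a touch sample is (time_in_seconds, column, row).
Sample = Tuple[float, int, int]


def classify_gesture(samples: List[Sample],
                     max_duration: float = 0.5,
                     min_cells: int = 3) -> Optional[str]:
    """Classify a time-ordered touch trace as a swipe, an edge drag, or nothing."""
    if len(samples) < min_cells:
        return None  # too few cells touched to call it a gesture
    if samples[-1][0] - samples[0][0] > max_duration:
        return None  # too slow to count as one continuous gesture

    cols = [c for _, c, _ in samples]
    rows = [r for _, _, r in samples]
    col_steps = [b - a for a, b in zip(cols, cols[1:])]
    row_steps = [b - a for a, b in zip(rows, rows[1:])]

    # Drag from off-screen (assumed: top edge): starts in row 0 and moves
    # straight down one row at a time within a single column.
    if rows[0] == 0 and len(set(cols)) == 1 and all(s == 1 for s in row_steps):
        return "drag_from_top"

    # Horizontal swipe: stays in one row and moves one column at a time.
    if len(set(rows)) == 1:
        if all(s == 1 for s in col_steps):
            return "swipe_right"
        if all(s == -1 for s in col_steps):
            return "swipe_left"
    return None
```

For example, `classify_gesture([(0.0, 1, 2), (0.05, 2, 2), (0.1, 3, 2)])` yields `"swipe_right"`. Requiring adjacent cells and a short duration is one simple way to keep gestures from being confused with ordinary button presses; a real device would likely need more tolerant matching.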

Take a look at the video to get a better understanding of the functionalities:

Evaluation

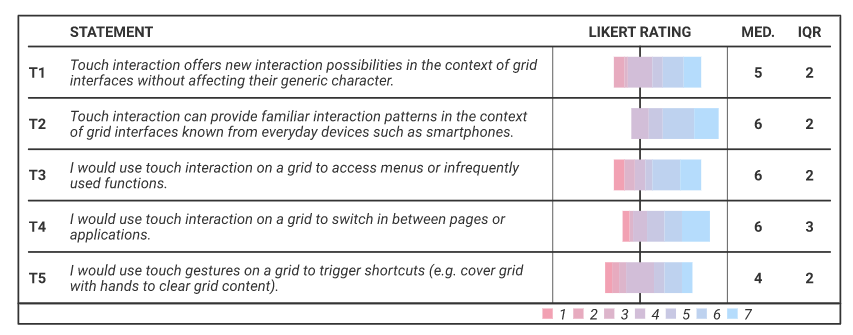

To evaluate their concept, they ran an online survey with 26 participants, to whom they showed the video above. Most of them stated that they were already familiar with touch interactions and could imagine using this interface. They even came up with a few more ideas for touch interactions, such as zooming into a sequence of data. When asked about their concerns, they said, for example, that the interface might become too complex, and they feared malfunctions and interference with existing button interactions.

Conclusion

With their concept, the authors took a different approach from many others: instead of aiming to make touchscreens more tangible, they took already familiar touch interactions and combined them with tangible grid interfaces. With TouchGrid, they try to get the best of both worlds. For now, they are still in the concept phase, focusing on the technical proof of concept, and are getting help from an expert group in evaluating their concept. In the future, they hope to work further on the “[…] development of touch resolution, with which more interactions can be detected and thus more expressive possibilities for manipulating and interacting with sounds in real time are available. Furthermore, combinations of touch interaction with simultaneous button presses are conceivable, opening up currently unconsidered interaction possibilities for future grid applications.”