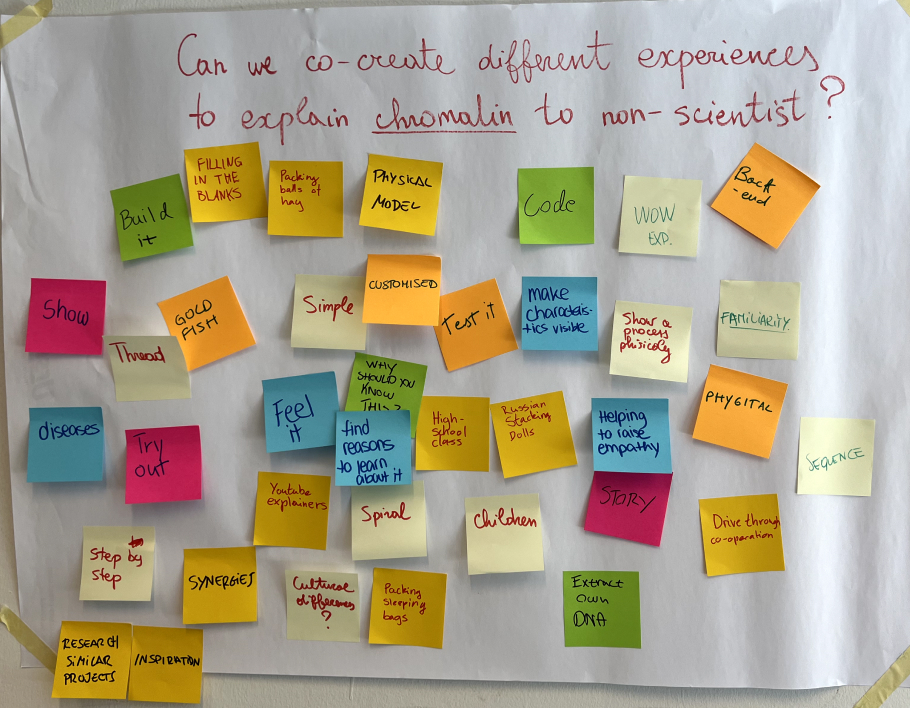

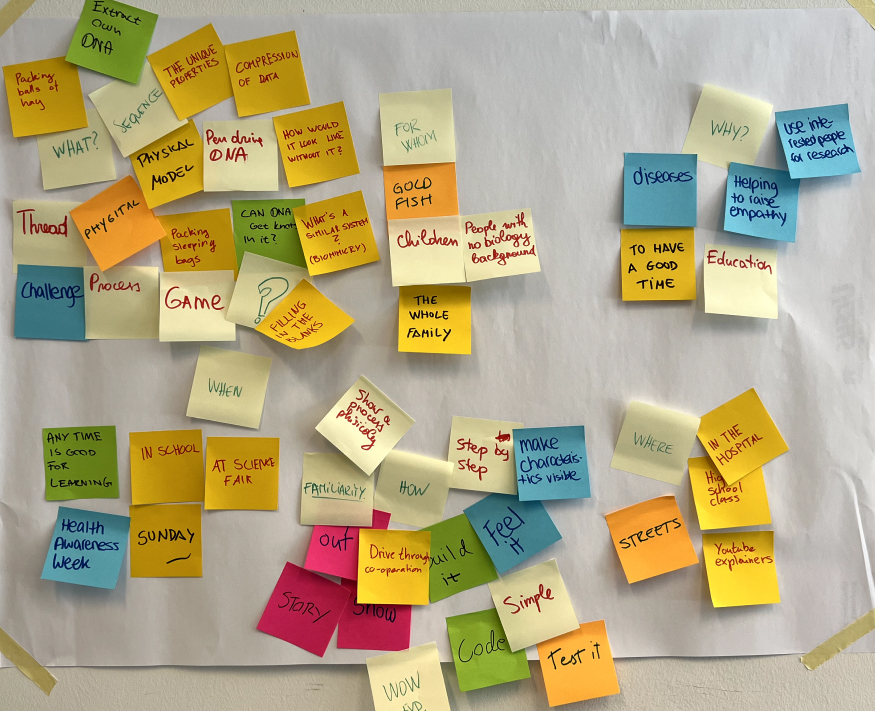

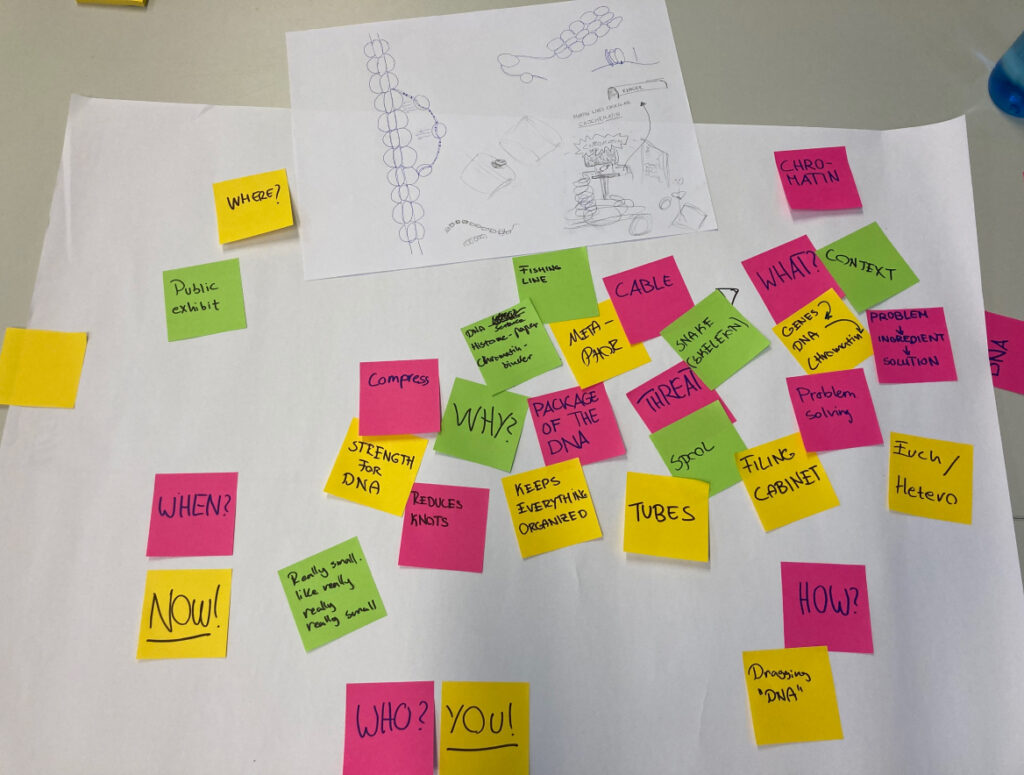

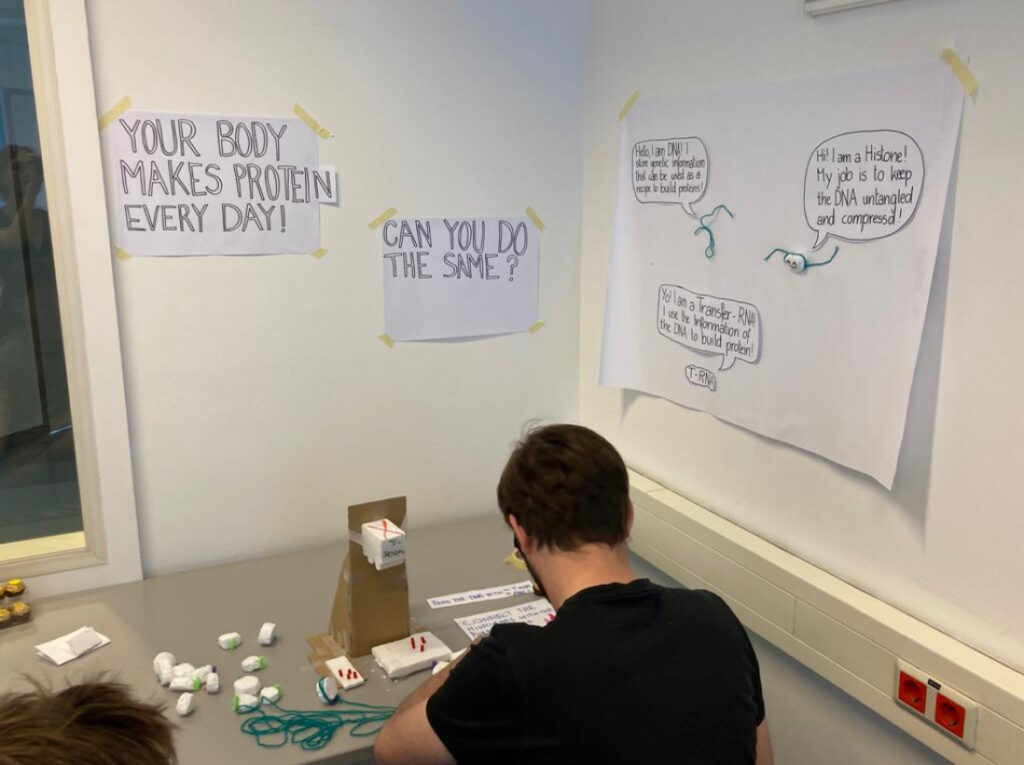

Over the last few semesters I have changed my research topic many times, so I am really glad that I have finally found one that genuinely interests me: interactive science communication. I first came into contact with it during last year's International Week at the FH, where I attended a workshop with Carla Molins Pitarch on prototyping phygital experiences to communicate scientific topics. I had never looked at interaction design in this way before and was really inspired by the workshop, so I have decided to write my master's thesis in this area as well.

(Interactive) Science Communication

Science communication aims to bring scientific topics and research findings to a broader audience in an accessible and understandable way. To achieve this, it uses various methods, such as making scientific articles and journals freely available online, using interactive exhibits in museums and science centers, or creating online tools and resources. Despite these efforts, many scientific articles remain difficult for non-scientists to understand. Interactive science communication could be one possible solution to this problem: interactive tools and techniques can make complex scientific concepts more accessible and understandable to the general public. This has several benefits, including promoting science literacy, enabling the public to take part in scientific discussions and decision-making processes, and fostering a deeper understanding of science.

Interactive learning

As mentioned above, scientific topics are often very complex and hard for non-scientists to understand. In my opinion, an interactive experience could help people understand such topics better. The main benefit of interactive learning is that users become the center of the learning experience. They can discover the topic at their own pace and, through interactive storytelling, become immersed in it. Research on active learning also suggests that people learn more easily when they actively engage with a topic. Gamified interactive elements combined with an overarching story can therefore help users understand and remember the topic. However, creating such interactive experiences also involves considerable costs, which need to be taken into account. The thesis will therefore also weigh the benefits of interactive science communication against these costs.

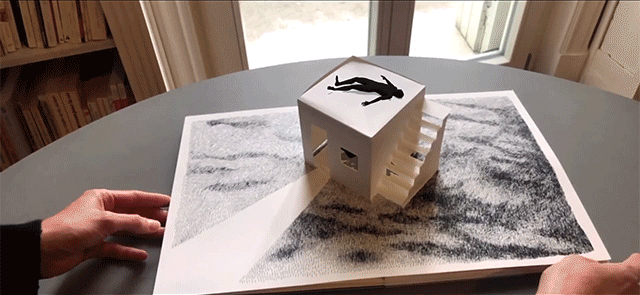

The practical work piece

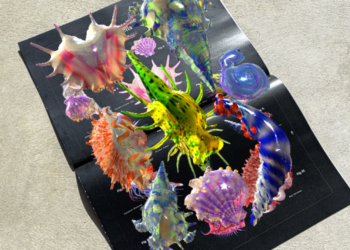

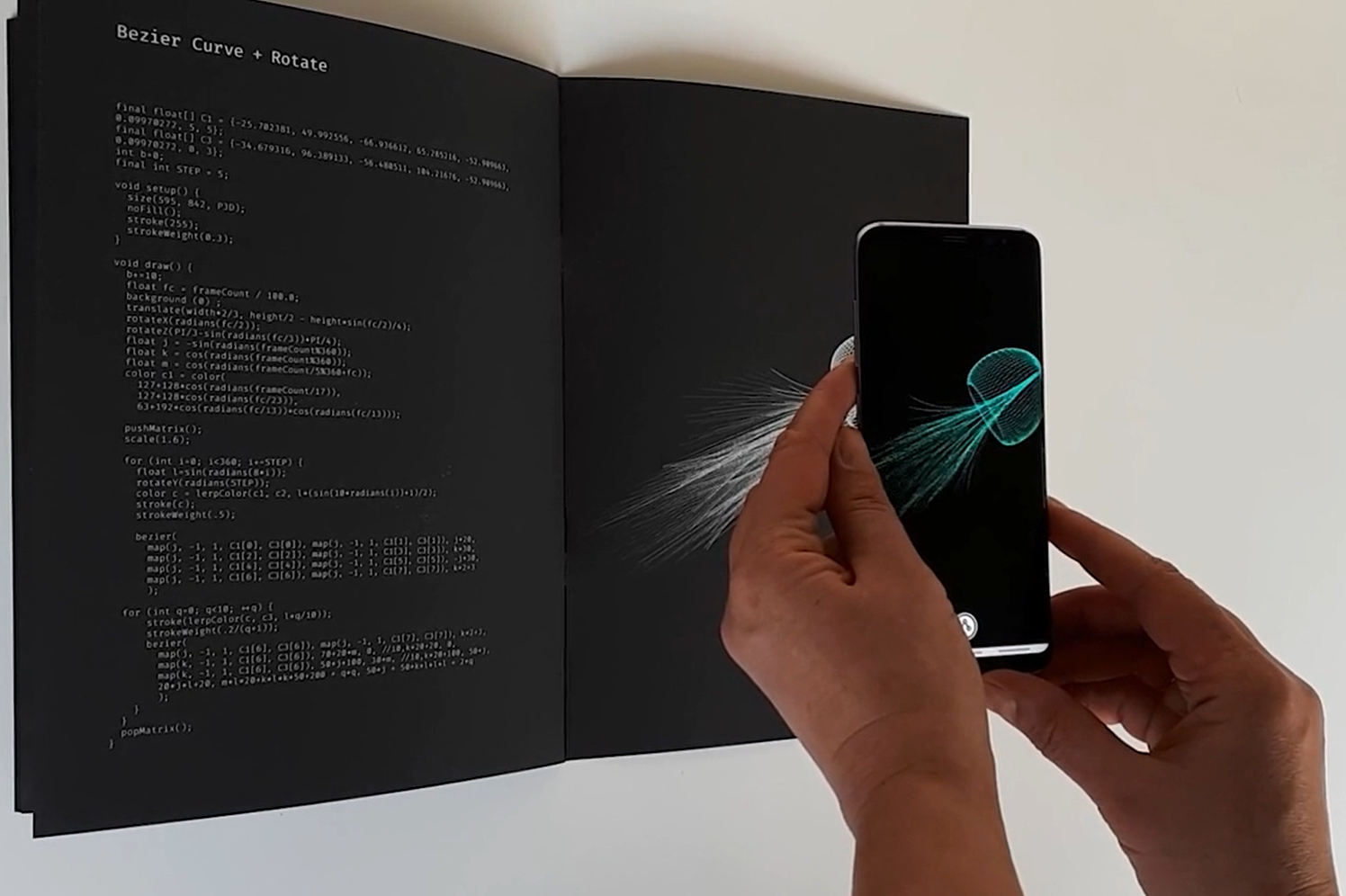

As my work piece, I would like to create an interactive science poster. Interactive science posters are an innovative approach to science communication, designed to make scientific information more engaging and accessible to a wider audience. They can combine interactive data visualization, interactive storytelling and gamification elements to make complex scientific concepts easier to grasp. I found the inspiration for the work piece on the Ars Electronica website, which features great examples of interactive science posters. You can have a look at them yourself here: https://ars.electronica.art/center/en/scicomlab/

I have not yet decided which topic I would like to communicate. At first, I wanted to explain a new and very controversial method for saving coral reefs. This method uses a cocktail of bacteria to strengthen the corals and make them more resistant. The method has been shown to work, but its downside is that its effects on the wider ecosystem have not yet been tested. The problem with this topic, however, is that I may not find experts on it here in Graz, which could make researching it quite challenging. In a feedback round, Orhan Kipcak suggested that I could approach different institutes at the FH or at another university in Graz and ask them directly whether they would need such a poster. This way, I would be in direct contact with the scientists and could actively work on a real project.

Next steps

This brings me to my next steps. First, I obviously need to do a lot more research on interactive science communication. I would also like to consult different science institutes to find out whether I could base the interactive science poster on one of their research projects. Finally, I would like to get in touch with the institute of science communication at the Karl-Franzens-Universität and ask whether they could help me with my research.