Following the signal flow, Pure Data reads in and processes the wristband sensor data, and creates and adjusts modulators and control variables. Ableton (Session View) then uses those variables to modulate and change the soundscape. During the installation, Ableton is therefore where sound is synthesized and played, whereas Pure Data handles the sensor input and converts it into logical modulator variables that control the soundscape over the short and long term.

Although I separated the structural parts of the software into individual “modules”, there is only one Pure Data patch with various sub-patches; the separation into modules was mainly done for organizational reasons.

Data Preparation

The goal of the first module was to clean and process the raw sensor data so that it becomes usable for the subsequent processing steps. I spent a substantial amount of time on this during the semester. Working with analogue data was a big challenge: it is subject to various physical influences such as gravity, electronic inaccuracies and interference, and simple losses or transport problems between the different modules, while I still needed quite accurate responses to movements. Additionally, the rotation data jumps from 1 to 0 on each full rotation (just as an angle would jump from 360 to 0 degrees), which also had to be translated into a smooth signal without jumps (converting it to a sine was very helpful here). Another issue was that the bounds (0 and 1) were rarely fully reached, so I had to find a way to reliably achieve the same results with different sensors at different times. I developed a principle of tracking the min/max values of the raw data and stretching that range to 0 to 1. This means that after the system is switched on, each sensor needs to be rotated in all directions for the Pure Data patch to “learn” its bounds.
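The patch itself is built from Pure Data objects, so the following Python snippet is only a rough sketch of the two ideas described above, not the actual implementation. The names `RangeLearner` and `rotation_to_sine` are made up for illustration, and it assumes the sensor delivers one float per reading.

```python
import math


class RangeLearner:
    """Tracks the smallest and largest raw value seen so far and
    stretches that observed range to 0..1 (hypothetical helper)."""

    def __init__(self):
        self.lo = math.inf
        self.hi = -math.inf

    def update(self, raw):
        # Widen the learned bounds if this reading lies outside them.
        self.lo = min(self.lo, raw)
        self.hi = max(self.hi, raw)
        span = self.hi - self.lo
        if span == 0:
            # Only one distinct value seen so far: no range to stretch yet.
            return 0.0
        return (raw - self.lo) / span


def rotation_to_sine(phase):
    """Map a rotation value that wraps from 1 back to 0 onto a sine,
    so that 0 and 1 produce (almost exactly) the same output."""
    return math.sin(2 * math.pi * phase)


learner = RangeLearner()
for reading in [0.2, 0.8, 0.5]:   # made-up raw readings
    scaled = learner.update(reading)
print(scaled)                     # close to 0.5, the middle of the learned range
```

One trade-off of the sine conversion in this sketch is that two different rotation values within a full turn map to the same output, which may or may not matter depending on how the value is used as a modulator.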

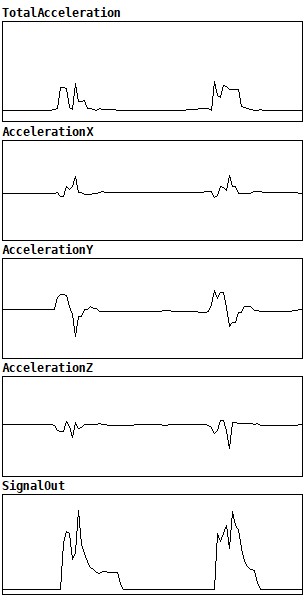

I don’t calculate how the sensor is currently oriented in space (which I possibly could, using gravity), and I soon decided that there is no real advantage in using the acceleration values of the individual axes, only the total acceleration (the magnitude of the acceleration vector, calculated with the Pythagorean theorem). I process this total acceleration value further by detecting ramps with the Pure Data threshold object. As of now, two different acceleration ramps are detected: one for hard and one for soft movements. I defined a hard movement as the kind one would use to move a shaker or hit a drum with a stick, whereas the soft movement is activated continuously as soon as one’s hand moves through the air at a certain speed.
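As a rough illustration of this step, the sketch below computes the total acceleration from three axis values and runs a simple detector on it. It is written in Python rather than Pure Data and makes several assumptions: three axes are available, the detector uses a trigger level and a lower rest level so that it fires only once per ramp, and all numeric levels are invented. `RampDetector` is a hypothetical name, not a Pure Data object.

```python
import math


def total_acceleration(ax, ay, az):
    """Length of the acceleration vector (Pythagorean theorem in 3D)."""
    return math.sqrt(ax * ax + ay * ay + az * az)


class RampDetector:
    """Fires once when the value rises to the trigger level, then stays
    silent until the value has dropped back to the rest level."""

    def __init__(self, trigger_level, rest_level):
        self.trigger_level = trigger_level
        self.rest_level = rest_level
        self.armed = True

    def process(self, value):
        if self.armed and value >= self.trigger_level:
            self.armed = False      # wait for the signal to come to rest
            return True
        if not self.armed and value <= self.rest_level:
            self.armed = True       # ready for the next ramp
        return False


# Two detectors with made-up levels: one for hard, one for soft movements.
hard = RampDetector(trigger_level=2.0, rest_level=1.0)
soft = RampDetector(trigger_level=0.6, rest_level=0.3)

for ax, ay, az in [(0.1, 0.2, 0.1), (0.5, 0.4, 0.3), (2.0, 1.0, 0.5)]:
    magnitude = total_acceleration(ax, ay, az)
    print(round(magnitude, 2), hard.process(magnitude), soft.process(magnitude))
```

The rest level keeps one movement from triggering repeatedly while the value hovers around the trigger level; the continuous behaviour described for soft movements would need additional logic that is not shown here.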

I originally imagined that it would be rather easy to play a percussion sound on such a “hard movement”. However, I realized that such a hand movement is quite complex, and many factors play a role in making the sound feel authentic. The acceleration peak when stopping one’s hand is usually bigger than the peak when starting a movement. But even when the stopping peak is used to trigger a sound, it doesn’t immediately sound authentic, since the band is mounted on the wrist and its acceleration peaks at slightly different moments than that of one’s fingertip or fist.