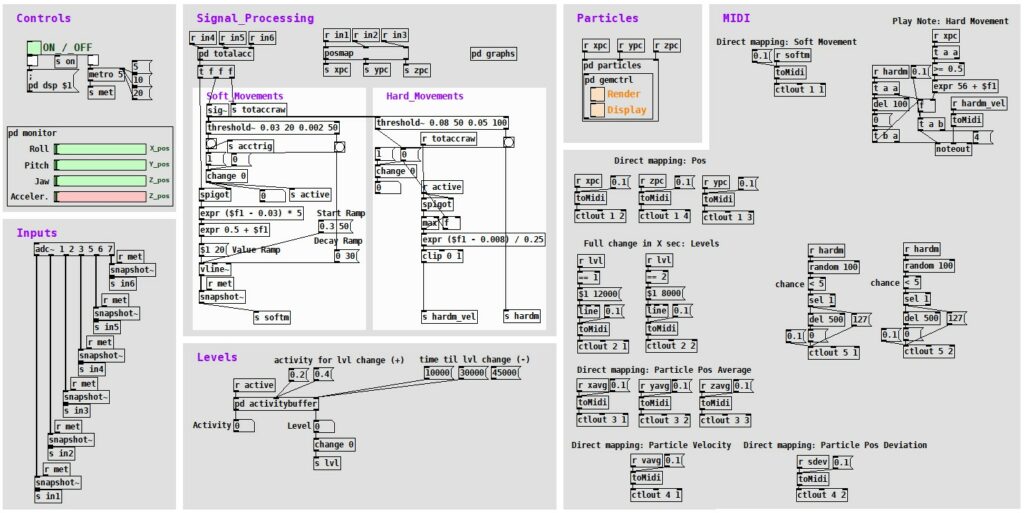

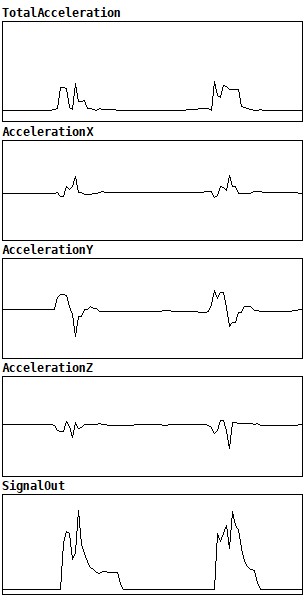

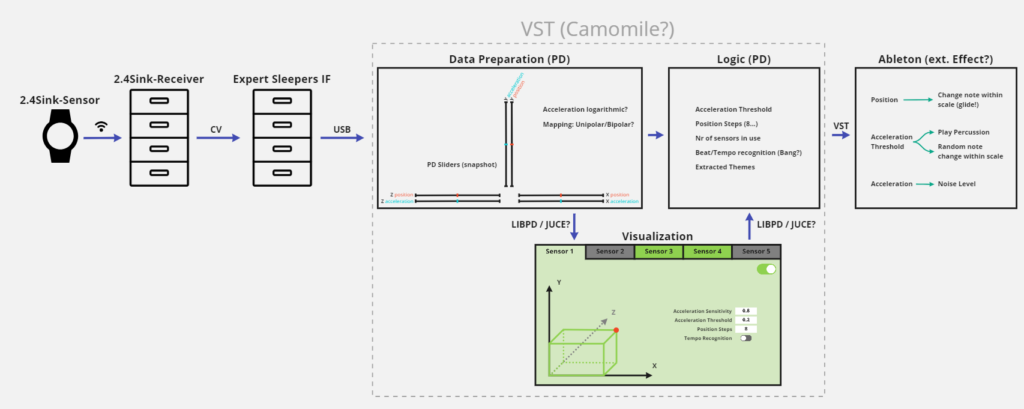

The logic module (still in the same Pure Data patch) is responsible for the direct control of the soundscape. It translates the processed sensor data into variables that can be mapped to parameters and functions in Ableton, with the goal of an entertaining, dynamically changing composition that reacts to its listeners’ movements. Over the course of this semester, I wrote down various functions that I thought might add value to the soundscape, but I soon realized that most functions and mappings simply have to be tried out with music before I can judge how well they work. The core concepts I have currently integrated are the following:

Soft movements

Great for giving a direct feel of control over the system, since each movement can produce a sound when mapped to, e.g., the level of a synth. An example would be a wind sound (swoosh) that gets louder the quicker one moves a hand through space.

Hard movements

Good for mapping percussive sounds. However, depending on one’s movements, the result is sometimes a little off and therefore distracting. Hard movements work well in indirect applications, such as counting them to trigger events.

Random trigger

With hard movements as the input variable, a good way to introduce movement-based but still stochastic variety to the soundscape is to output a trigger by chance with each hard movement. For example, each hard movement could have a 5% chance of sending such a trigger. This is a great way to switch from a playing MIDI clip or sample to another one.
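As a minimal sketch outside of Pure Data, the random trigger could look like this in Python. The function names and the seeded random generator are hypothetical and only serve the illustration; the 5% probability is the example value from above.

```python
import random


def make_random_trigger(probability=0.05, rng=None):
    """Build a callback for hard movements that fires by chance.

    `probability` is the chance (0.0 to 1.0) that a single hard
    movement sends a trigger. `rng` is an optional random.Random
    instance, useful for reproducible experiments (an assumption,
    not part of the original patch).
    """
    if rng is None:
        rng = random.Random()

    def on_hard_movement():
        # rng.random() is uniform in [0.0, 1.0), so this is True
        # with the requested probability.
        return rng.random() < probability

    return on_hard_movement


# Hypothetical usage: call the callback once per detected hard movement.
trigger = make_random_trigger(probability=0.05)
fired = sum(trigger() for _ in range(1000))
```

With a 5% chance, roughly one in twenty hard movements would switch the clip, so the change still feels caused by movement without being predictable.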

Direct rotation mappings

The rotation values of the watch can be mapped very well onto parameters or effects in a direct fashion.
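A direct mapping of this kind is essentially a range scaling. The sketch below is in Python with hypothetical names. It assumes a rotation angle between -180 and 180 degrees and a 0 to 127 target range (as used by MIDI controller values); both ranges are assumptions and not taken from the actual patch.

```python
def scale_rotation(angle_deg, in_min=-180.0, in_max=180.0,
                   out_min=0, out_max=127):
    """Linearly map a rotation angle onto a parameter range.

    The input and output ranges are assumed values for illustration.
    Angles outside the input range are clamped to its limits.
    """
    clamped = max(in_min, min(in_max, angle_deg))
    normalized = (clamped - in_min) / (in_max - in_min)  # 0.0 to 1.0
    return round(out_min + normalized * (out_max - out_min))


# Hypothetical usage: map a wrist rotation of 90 degrees onto a parameter.
filter_value = scale_rotation(90.0)
```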

Levels

A tool that I see as very important for a composition that can truly match its listeners’ energy is the introduction of different composition levels based on the visitors’ activity. How such a level changes is quite straightforward:

I record all soft movement activity (whether a soft movement is currently active or not) into a 30-second ring buffer and calculate the percentage of activity within that buffer. If the activity percentage crosses a threshold (e.g., around 40%), the next level is reached. If the activity stays below a threshold for long enough, the previous level becomes active again.
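The level logic can be sketched in Python as follows. This is an illustration under stated assumptions, not the Pure Data implementation: the sample rate (10 readings per second, so 300 samples for 30 seconds), the lower threshold, the hold time, the maximum level, and the choice to clear the buffer after a level-up are all hypothetical.

```python
from collections import deque


class ActivityLevel:
    """Track a composition level from soft-movement activity."""

    def __init__(self, window_size=300, up_threshold=0.4,
                 down_threshold=0.2, down_hold=100, max_level=3):
        # deque with maxlen acts as a ring buffer: old samples drop out.
        self.buffer = deque(maxlen=window_size)
        self.up_threshold = up_threshold      # e.g. around 40% activity
        self.down_threshold = down_threshold  # assumed value
        self.down_hold = down_hold            # assumed "long enough", in samples
        self.max_level = max_level            # assumed number of levels
        self.level = 0
        self.quiet_samples = 0

    def update(self, soft_movement_active):
        """Feed one sample (True/False) and return the current level."""
        self.buffer.append(1 if soft_movement_active else 0)
        if len(self.buffer) < self.buffer.maxlen:
            return self.level  # wait until the window is full

        activity = sum(self.buffer) / len(self.buffer)
        if activity >= self.up_threshold and self.level < self.max_level:
            self.level += 1
            self.quiet_samples = 0
            # Assumption: start a fresh window so one burst of activity
            # does not climb several levels at once.
            self.buffer.clear()
        elif activity < self.down_threshold:
            self.quiet_samples += 1
            if self.quiet_samples >= self.down_hold and self.level > 0:
                self.level -= 1
                self.quiet_samples = 0
        else:
            self.quiet_samples = 0
        return self.level
```

Using a lower threshold for stepping down than for stepping up is one possible way to keep the level from flickering around a single boundary.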

While I could already reach a certain level of complexity in my soundscape with these mechanisms, my supervisor inspired me to go a step further. It was true that all the direct mappings, even if modulated over time, risked becoming monotonous. So he gave me the idea of using particle systems inside my core logic to introduce more variation and create more interesting mapping variables.

Particle System

I found an existing Pure Data library for working with particle systems, which is based on the Graphics Environment for Multimedia (GEM). This did, however, mean that I had to actually visualize everything I was calculating, but I found that at least during development this was very helpful, since it is difficult to imagine how a particle movement might look based only on a table of numbers. I set the particles’ three-dimensional spawn area to move depending on the rotation of the wristband around its three axes. At the same time, I move an orbital point (a point that particles are pulled towards and orbit around) in the same way. This has the great effect that a second velocity system is involved that does not depend directly on the acceleration of the wristband (it does, however, depend on how quickly the wristband is rotated). The actual parameters that I use in a mapping are all statistical in nature: I calculate the average X, Y and Z position across all particles, the average velocity of all particles, and the dispersion from the average position. Using those averages in mappings introduces inertia to the movements, which makes the composition’s direct reactions to one’s motion more complex and a bit arbitrary.
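The statistical parameters can be illustrated with a short Python sketch. It does not use GEM; the function name and the data layout (lists of 3D tuples) are hypothetical. It also makes two assumptions about the definitions: "average velocity" is read as the mean speed (magnitude of the velocity vector), and "dispersion" as the mean distance of the particles from their average position.

```python
import math


def particle_statistics(positions, velocities):
    """Summarize a particle system for use in mappings.

    positions and velocities are equally long, non-empty lists of
    (x, y, z) tuples. Returns (mean_position, mean_speed, dispersion).
    """
    n = len(positions)

    # Average X, Y and Z position across all particles (the centroid).
    mean_position = tuple(sum(p[i] for p in positions) / n for i in range(3))

    # Assumption: average velocity means average speed (vector length).
    mean_speed = sum(math.sqrt(vx * vx + vy * vy + vz * vz)
                     for vx, vy, vz in velocities) / n

    # Assumption: dispersion is the mean distance to the centroid.
    dispersion = sum(math.dist(p, mean_position) for p in positions) / n

    return mean_position, mean_speed, dispersion


# Hypothetical usage with two particles.
stats = particle_statistics(
    positions=[(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    velocities=[(1.0, 0.0, 0.0), (0.0, 3.0, 4.0)],
)
```

Because each value is an average over many particles, a single quick wrist movement shifts it only gradually, which matches the inertia described above.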