A short summary of a paper on human aspects related to automotive AR application design

A research paper with the same title as this blog post, “User-centered perspectives for automotive augmented reality”, by experts from the Honda Research Institute (USA), Stanford University (USA) and the Max-Planck-Institut für Informatik (Germany) [1], discusses benefits, challenges, the authors’ design approach and open questions regarding Augmented Reality in the automotive context, with a focus on the users.

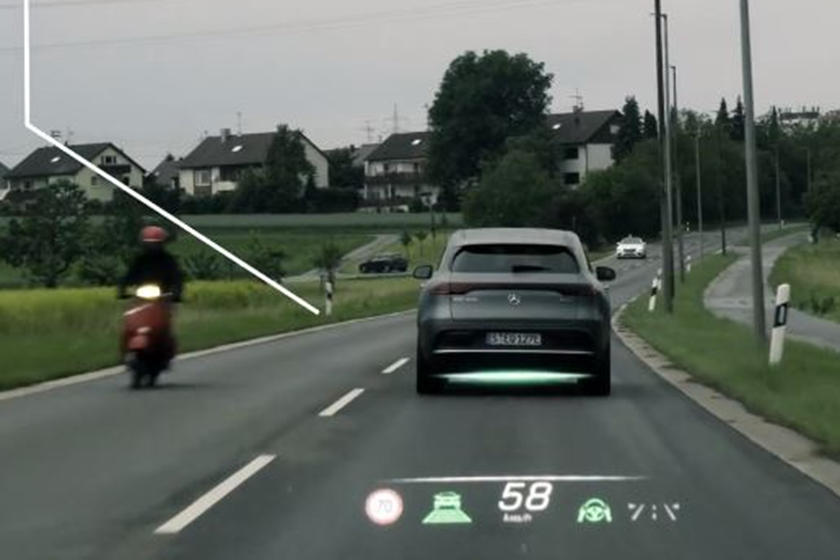

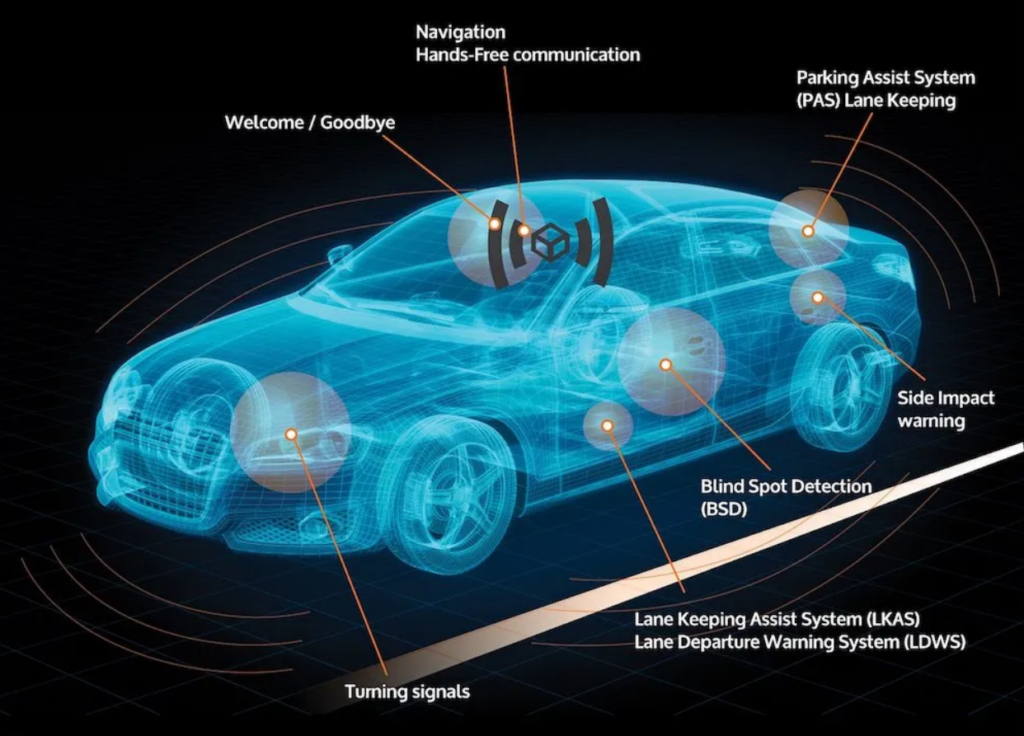

Augmented Reality (AR) can help drivers by pointing out important and potentially dangerous objects in their view and by increasing their situation awareness. However, if the information is presented incorrectly, it can distract or confuse the driver and lead to dangerous situations.

The authors set up a design process that focuses on finding the appropriate form of solution to a driver’s problem (rather than just describing ideas technically).

To understand the drivers’ problems in the first place, they conducted in-car user interviews with different demographic groups to gather information about driving habits, concerns and the integration of driving into daily life.

After the interviews, they ideated prototype solutions and tested the concepts in a driving simulator with a HUD. One finding was that, when turning left, drivers needed more help timing the turn relative to oncoming traffic than an arrow or other graphical aid for the turning path, which even distracted them from the oncoming traffic. The authors’ design solutions therefore focus on giving drivers additional cues that enhance awareness rather than only giving navigation commands. When different graphical styles for indicating the turning path were compared, a solid red path projection, which stays visible in peripheral vision while the driver focuses on traffic, caused less distraction than simple chevron-style lines.

Human visual perception

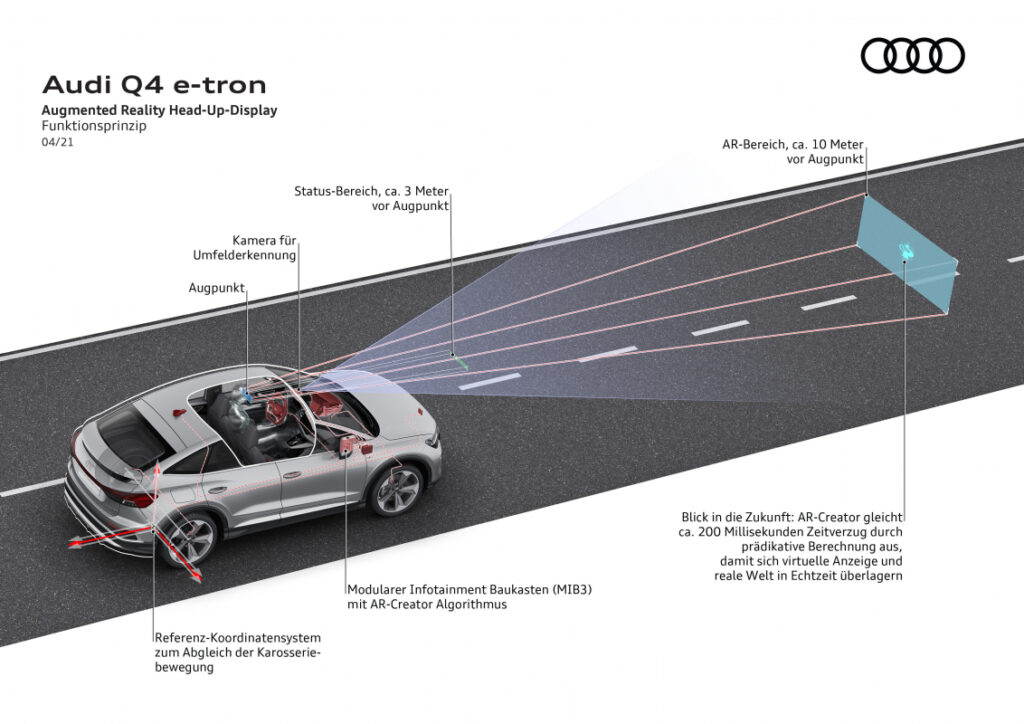

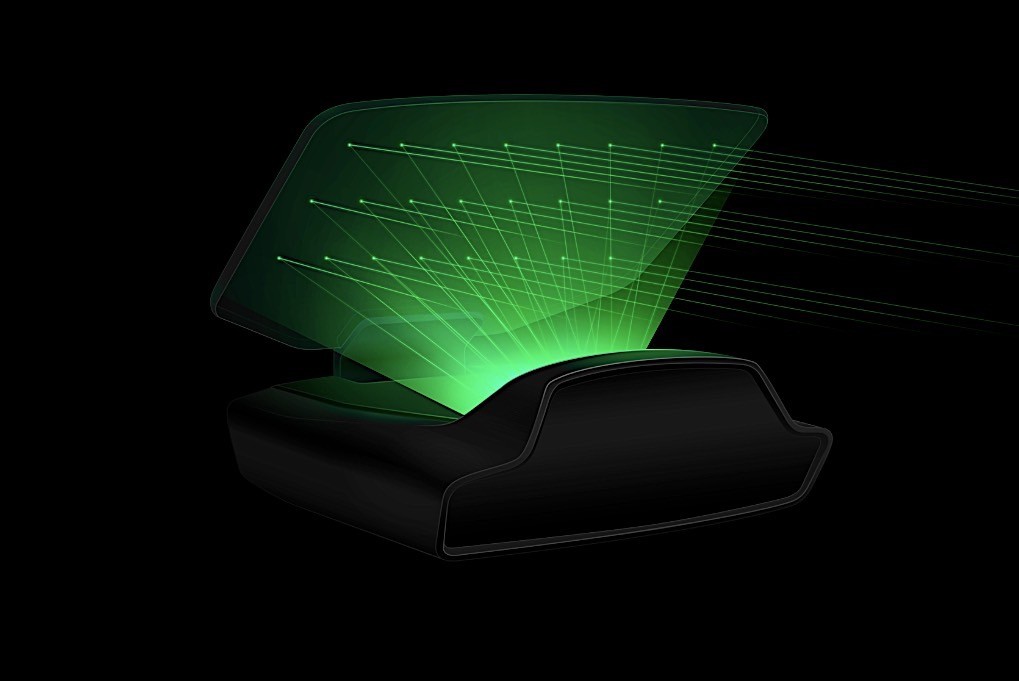

Regarding human perception, the authors analyzed the influence of visual depth perception and the field of view. The human eye can focus on only one distance at a time, so see-through AR displays and head-up displays (HUDs) can cause problems: the driver’s focus has to remain on the road ahead rather than shift to the distance of the windshield, which would blur the more distant scene.

The eye’s fovea, the area of highest acuity, covers only about the central 2° of the visual field. This determines the so-called “Useful Field Of View” (UFOV), the limited area from which information can be gained without head movement. These restrictions suggest using augmentation only in the driver’s main field of view rather than across the whole windshield. Objects in the periphery should therefore be signaled either inside the UFOV or through other methods.
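To put the roughly 2° figure into perspective, the width w that a visual angle θ covers at a viewing distance d follows from basic geometry: w = 2d·tan(θ/2). The minimal Python sketch below applies this formula. The function name and the example distances are illustrative assumptions, not values from the paper.

```python
import math


def subtended_width(distance_m: float, angle_deg: float = 2.0) -> float:
    """Return the width (m) covered by a visual angle centred on the line of sight.

    Uses w = 2 * d * tan(theta / 2) for a flat target perpendicular to the gaze.
    The default of 2 degrees approximates the foveal region described above.
    """
    half_angle_rad = math.radians(angle_deg) / 2
    return 2 * distance_m * math.tan(half_angle_rad)


# Example distances ahead of the driver (illustrative assumptions only).
for d in (10.0, 20.0, 50.0):
    print(f"{d:4.0f} m ahead -> {subtended_width(d):.2f} m wide")
```

At 50 m, for example, the sharpest part of vision covers a strip only about 1.75 m wide, so most of the road scene lies outside it at any given moment.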

Distraction

The US National Highway Traffic Safety Administration (NHTSA) distinguishes three types of driver distraction:

- visual distraction (eyes off road)

- cognitive distraction (mind off driving)

- manual distraction (hands off the wheel)

Augmented Reality applications in vehicles can help counter each of these types, but they can also cause them.

The authors further discussed the human attention system and problems of cognitive dissonance.

- Attention system

Regarding the human attention system, so-called “selective visual attention” and “inattentional blindness” can be problems in driving conditions. Important visual cues can be suppressed when the driver is focusing on secondary tasks or when the cues lie outside the focus of attention. Warning signs on a HUD can help by attracting attention, but they can also distract from other objects outside the augmented field of view. The paper states the need for further research on the balance between increasing attention and avoiding unwanted distraction.

- Cognitive Dissonance

Cognitive dissonance, the perception of contradictory information, could occur, for example, when 2D graphics are poorly overlaid on the driver’s 3D view of the surroundings, causing confusion or misinterpretation of the visual cues.

Human behaviour

As a third category, the study discusses the effects of AR technology on human behaviour.

Situation awareness, i.e. maintaining information about the current and future state of the surroundings, is described by one source in three steps:

- Perception of elements in the environment

- Comprehension of their meaning

- Projection of future system states

AR can help drivers not only with perception but also with the later steps. State-of-the-art computing, AI technology and connected-car data from the surroundings can be especially helpful where additional computational power can predict traffic dynamics. [comment of MSK]
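As a toy illustration of the three steps (not taken from the paper), the Python sketch below applies them to the left-turn situation described earlier. The `DetectedVehicle` record, the relevance rule, the constant-velocity projection and the 6 s gap threshold are all hypothetical assumptions chosen for readability; a real system would rely on far richer sensor data and prediction models.

```python
from dataclasses import dataclass


@dataclass
class DetectedVehicle:
    """Step 1, perception: a hypothetical sensor output for one vehicle."""
    distance_to_intersection_m: float
    speed_mps: float
    oncoming: bool


def is_relevant(v: DetectedVehicle) -> bool:
    """Step 2, comprehension: a toy rule that only moving oncoming vehicles matter."""
    return v.oncoming and v.speed_mps > 0


def time_to_arrival_s(v: DetectedVehicle) -> float:
    """Step 3, projection: assumes the vehicle keeps a constant speed."""
    return v.distance_to_intersection_m / v.speed_mps


def safe_to_turn(vehicles: list[DetectedVehicle], required_gap_s: float = 6.0) -> bool:
    """Hypothetical cue: True if no relevant vehicle arrives within the required gap."""
    return all(
        time_to_arrival_s(v) > required_gap_s
        for v in vehicles
        if is_relevant(v)
    )


# Usage: an oncoming car 80 m away at 14 m/s arrives in about 5.7 s,
# which is below the assumed 6 s gap; the non-oncoming car is ignored.
traffic = [DetectedVehicle(80.0, 14.0, True), DetectedVehicle(30.0, 10.0, False)]
print(safe_to_turn(traffic))  # False
```

The projection step is the part where, as noted above, extra computation and connected-car data could offer drivers information they cannot easily estimate themselves, such as the timing cue the interviewed drivers needed for left turns.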

Another aspect is how drivers’ behaviour changes after longer use of assistance systems. One study suggests that the reduced mental workload could lead to a loss of the drivers’ native skills. Furthermore, a phenomenon called “risk compensation” can occur once drivers get used to the aids: they behave more riskily than usual because they feel more confident about their surroundings. Because these behavioural changes can have dangerous consequences, the authors suggest using driver aids only when they are needed.

According to one source, a user’s trust in a technology can be increased with more realistic visual displays, such as AR, rather than simple map displays. AR can also help to build trust in autonomous cars by communicating the system’s perception, plans and reasons for its decisions.

At the end, the paper lists some open questions for further research.

For example: How can multiple aiding systems interact at the same time? How will long-term use of AR affect drivers’ behaviour and skills when they have to switch back to a non-AR vehicle? Will drivers’ skills deteriorate over time, and will they become dependent on these aiding systems?

My conclusion

This paper was published in 2013, and the technology has developed significantly since then. Nevertheless, the basic principles and human factors that have to be considered when designing safety-critical automotive applications remain the same.

Reliability, and an understanding of how autonomous vehicles behave, will be essential for acceptance by drivers and passengers. Augmented Reality can be of great help not only for additional driver assistance systems but also for the complete user experience at different levels of automation.

The human-factors topics discussed in this paper focus only on visual augmentation and assistance. They could be extended to other modalities such as sound and haptic augmentation, including an analysis of how drivers perceive combined multimodal assistance.

Source

[1] Ng-Thow-Hing, Victor & Bark, Karlin & Beckwith, Lee & Tran, Cuong & Bhandari, Rishabh & Sridhar, Srinath. (2013). User-centered perspectives for automotive augmented reality. 2013 IEEE International Symposium on Mixed and Augmented Reality – Arts, Media, and Humanities, ISMAR-AMH 2013. 13-22. 10.1109/ISMAR-AMH.2013.6671262.

Retrieved on 30.01.2022. https://www.researchgate.net/publication/261447349_User-centered_perspectives_for_automotive_augmented_reality