Methods and findings of a multi-day performance research lab that evaluated the efficacy of a novel nerve sensor in the context of a physically inclusive performance practice.

by Lloyd May and Peter Larsson

Making musical performance more accessible is something that many artists, such as Atau Tanaka and Laetitia Sonami, as well as scientists, have been working toward for some time. Many of these efforts point in the “nerve-sensor” direction. This approach aims to detect signals from nerve firing rather than skeletal muscle movement, so performers with physical conditions can have more control over the sensors.

Even though the range of gestures was not as broad as what other gestural instruments offer, the sensor was better at communicating gestural effort, as shown in explorations across different sound practices such as free improvisation and the development of a piece called Frustentions.

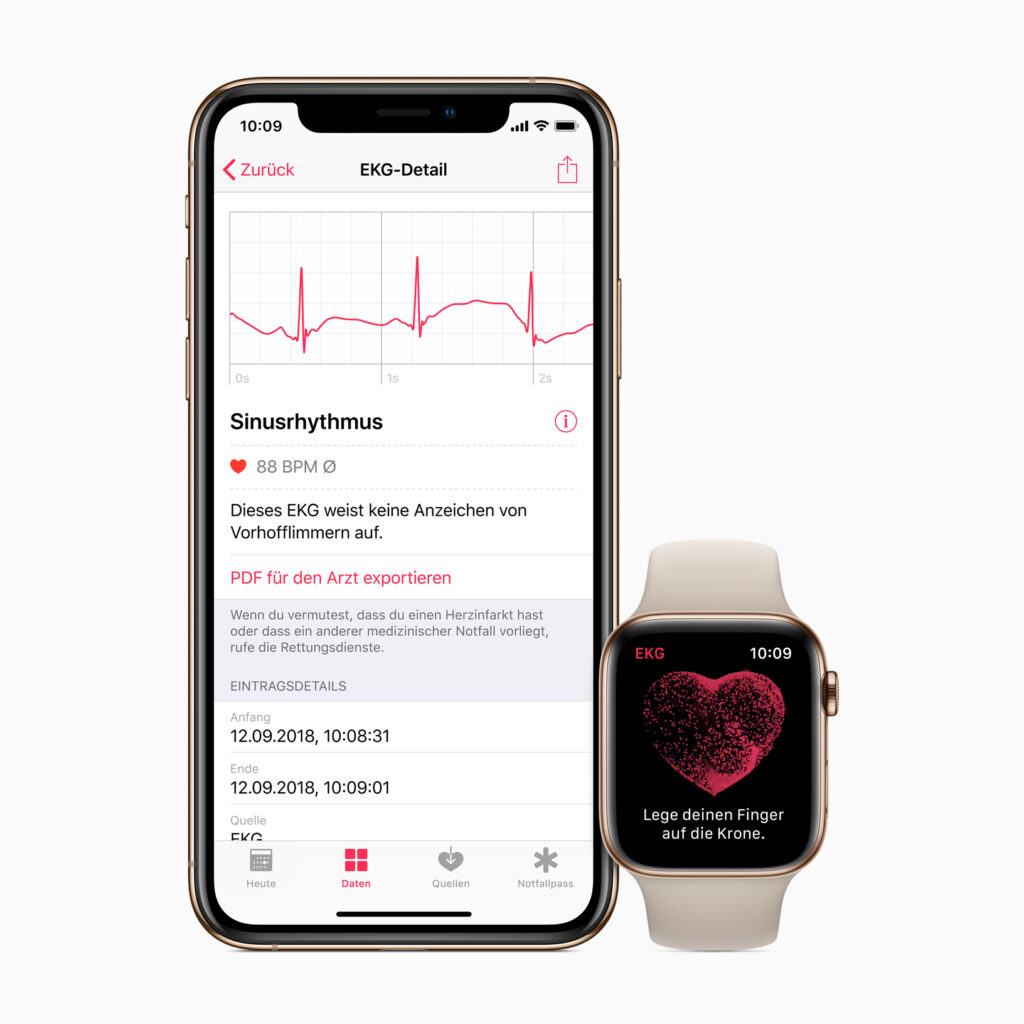

Electromyography is a technique that measures the electrical activity of skeletal muscles and nerves using non-invasive sensors placed directly on the skin. Thanks to it, more and more people with muscle atrophy or compromised volitional control of skeletal muscles are gaining access to technologies, for example in gaming. But, as often happens, the broader the accessibility, the more potentially harmful lenses can come with it. It is therefore important to keep in mind that every individual is unique and to be aware of the invisible boundaries that the technology can set around the people it is supposed to serve.

The more people with different physical and mental abilities get involved in these sound-making explorations, the better and more openly accessible the design of the interfaces will be.

For this specific exploration, four parameters were investigated: sensor position, gesture types, minimal-movement gestures, and various sound-mapping parameters. The lab was structured into several sessions, each concluding with a performative exploration, followed by a structured public showcase and discussion at the end of the lab. Minimal-movement “neural” gestures were investigated as well, but not much data could be gathered for them. The outcome of the sessions was the aforementioned piece, Frustentions, a fixed media composition developed during the workshop.

Three groups of gestures were identified during the sessions in order to record the needed data: effort gestures, which were particularly suited to audio effects that align well with swelling, such as distortion, delay, or reverb; adjustment gestures, which often required full focus and were not necessarily accessible at all times during a performance; and trigger gestures.
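To make the difference between effort and trigger gestures more concrete, here is a minimal Python sketch of how a sensor signal could be turned into control data. It is not the mapping used in the lab, which the summary does not describe. The function names, the smoothing factor, and every threshold value are assumptions chosen for illustration: an effort gesture becomes a continuous effect amount that swells with the signal, while a trigger gesture becomes a discrete event.

```python
def envelope(samples, alpha=0.1):
    """Rectify the signal and smooth it with a one-pole low-pass filter.

    `alpha` (0..1) is an assumed smoothing factor, not a value from the lab.
    """
    level = 0.0
    smoothed = []
    for sample in samples:
        # Move the running level a fraction of the way toward |sample|.
        level += alpha * (abs(sample) - level)
        smoothed.append(level)
    return smoothed


def effort_to_amount(level, floor=0.05, ceiling=0.8):
    """Map an envelope level to a 0..1 effect amount (e.g. reverb mix).

    Levels at or below `floor` give 0.0, levels at or above `ceiling`
    give 1.0. Both bounds are hypothetical calibration values.
    """
    if ceiling <= floor:
        raise ValueError("ceiling must be greater than floor")
    scaled = (level - floor) / (ceiling - floor)
    return min(1.0, max(0.0, scaled))


def detect_triggers(levels, on=0.5, off=0.3):
    """Return the indices at which a trigger gesture fires.

    A trigger fires when the level reaches `on`. It cannot fire again
    until the level has dropped to `off` or below, so a single sustained
    contraction produces a single event.
    """
    triggers = []
    armed = True
    for index, level in enumerate(levels):
        if armed and level >= on:
            triggers.append(index)
            armed = False
        elif not armed and level <= off:
            armed = True
    return triggers
```

Using two thresholds for the trigger is a deliberate choice in this sketch: a noisy signal hovering around a single threshold would otherwise fire repeatedly. In practice the bounds would presumably need to be calibrated for each performer and sensor position.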

The nerve sensor was compared with other interfaces like the MiMu glove, the Gestrument, and Soundbeam. Even though these other instruments recognized a wider range of gestures with better accuracy, they were more challenging to use with limited fine-motor capabilities. In addition, the wearable sensor afforded the performer greater opportunities to connect visually with ensemble members or the audience, as there was no immediate requirement to view or interact directly with a screen.

Conclusions

Research aimed at making musical performance accessible to everyone has multiple benefits, clearly on a physical level, but above all on a neural and psychological level. It is surprising how many things associated with leisure are out of reach for many people, simply because their physical condition does not meet the standards for which those activities are designed. The possibility that all these people can access activities of enjoyment represents a clear increase in the quality of life for them and for the people around them.

Nerve sensors are just one example, and thanks to this exploratory initiative we can get to know them and compare data with other instruments on the market. In more advanced stages of research, I would like to imagine that these interfaces are also used medically, to alleviate the effects of some diseases, improve physical conditions, and even reduce motor damage that originates in the brain by promoting nerve and muscle movement. Music is obviously a means of enjoyment, but together with science, it can be a means of healing.